The Evolution of Artificial Intelligence: From Myths to Multimodal Agents

The evolution of artificial intelligence is a story that stretches from ancient myths to the multimodal agents reshaping our world today. This article gives an overview of the history, key milestones, and future directions of AI for professionals, students, and anyone interested in how AI is transforming society. By tracing that evolution, from its mythological roots and foundational breakthroughs to today’s generative and agentic systems, readers will gain the context needed to navigate current and future technological changes with confidence.

Understanding the evolution of artificial intelligence is crucial for anyone seeking to make informed decisions in a rapidly changing landscape. Whether you are a business leader, a technology professional, a student, or simply curious about AI, knowing the history and trajectory of AI helps you anticipate opportunities, manage risks, and adapt to the profound shifts AI is bringing to every industry.

What Is Artificial Intelligence?

Artificial intelligence (AI) refers to the capability of machines to perform tasks that typically require human intelligence, such as reasoning, learning, problem-solving, perception, and language understanding. The term "artificial intelligence" was first coined in a workshop proposal submitted by John McCarthy in 1955, and the field of AI research was founded at a workshop held on the campus of Dartmouth College in 1956. Since then, AI has evolved through several distinct eras, each marked by unique approaches and breakthroughs.

The Four Major Eras of AI

The development of AI and its societal impact can be divided into four major eras:

Rule-Based & Expert Systems (1950s–1990s): Early AI focused on symbolic reasoning and hand-coded rules, leading to expert systems that could solve specific problems.

Machine & Deep Learning (2000s–2010s): The rise of statistical methods, big data, and neural networks enabled machines to learn from data, powering advances in image and speech recognition.

Generative AI (Early 2020s): AI systems began generating text, images, audio, and video at near-human quality, with models like GPT-4 and DALL-E.

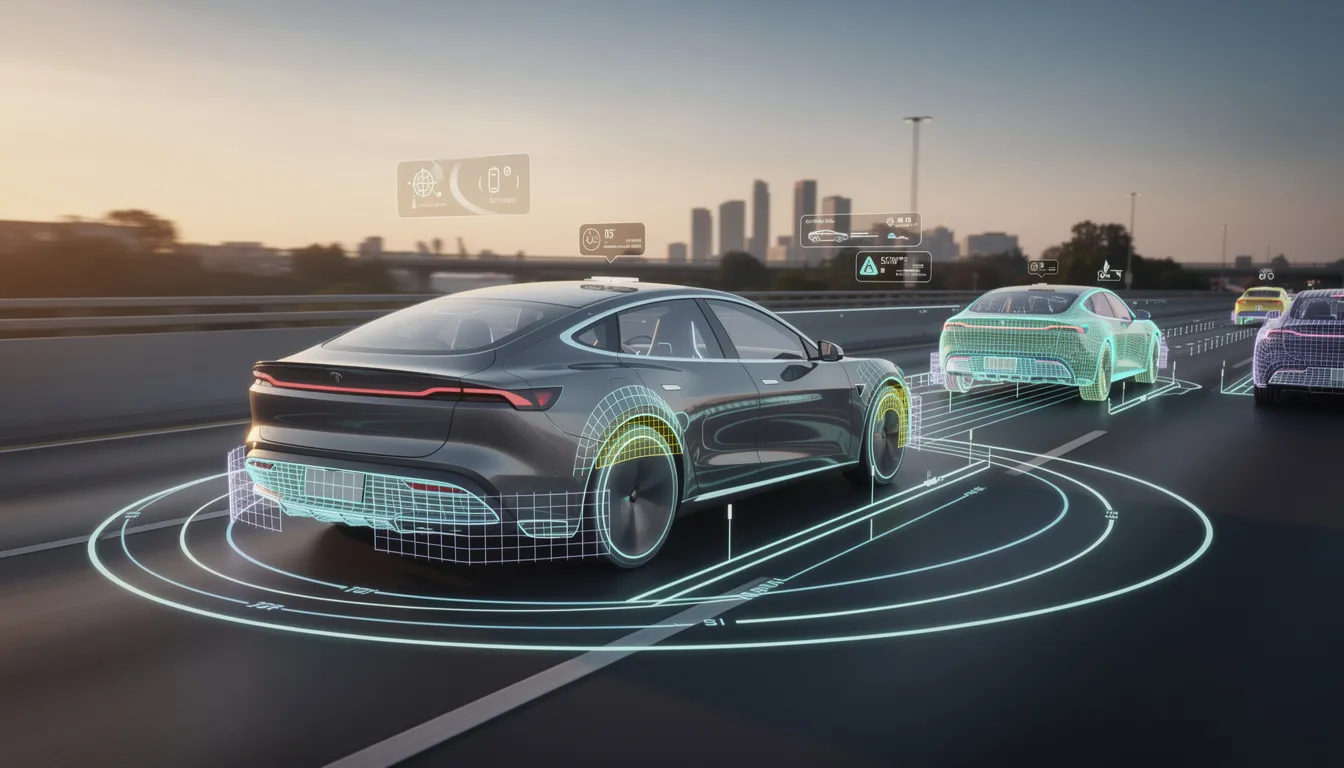

Autonomous Agentic & Physical AI (2025–2026+): The next wave will see AI systems acting autonomously in the physical and digital world, planning and executing complex tasks with minimal human intervention.

ANI vs. AGI: Understanding the Difference

Artificial Narrow Intelligence (ANI): Current AI systems are classified as ANI, designed for specific tasks such as language translation, image recognition, or playing chess. They excel in narrow domains but lack general reasoning abilities.

Artificial General Intelligence (AGI): AGI refers to future AI systems that can perform any intellectual task a human can, demonstrating flexible, general-purpose intelligence across domains.

Key Takeaways

The evolution of artificial intelligence spans from ancient myths like Talos and the Golem through the 1956 Dartmouth Conference that formally birthed AI as an academic discipline, to today’s transformer-based large language models like GPT-4 and Gemini 1.5.

AI history follows a cyclic pattern of booms and AI winters. The 1973 Lighthill report and the late-1980s expert system crash taught the field hard lessons about overpromising, shaping today’s more rigorous, data-driven AI research.

Since 2012, the combination of deep learning, big data, and GPU computing power has triggered unprecedented acceleration, with AlexNet’s ImageNet victory opening the floodgates for practical AI applications.

Today’s generative AI systems can produce text, images, audio, and video at near-human quality. ChatGPT reached 100 million users within two months of its November 2022 launch, while Sora generates 60-second video clips from text prompts.

Tracking this rapid evolution efficiently is crucial: KeepSanity AI delivers one weekly, ad-free briefing curated from top sources, helping professionals stay informed without drowning in daily hype.

From Ancient Dreams to Early Machines (Before 1940)

The earliest examples of artificial intelligence concepts didn’t emerge from computer science labs-they sprang from human imagination thousands of years before the first computer program existed. Ancient myths and mechanical inventions laid the conceptual groundwork for what would eventually become a formal scientific pursuit.

Mythological Precursors

Classical and medieval cultures imagined artificial beings long before anyone could build them:

| Origin | Creation | Date | Concept |
|---|---|---|---|
| Greek Mythology | Talos | ~8th century BCE | Bronze automaton forged by Hephaestus to patrol Crete autonomously |
| Jewish Folklore | Golem | 16th century Prague | Clay figure animated through ritualistic incantations to perform labor |
| Jonathan Swift | Lagado Engine | 1726 | Mechanical device in Gulliver’s Travels that algorithmically rearranged words to generate prose |

Swift’s satirical “engine” is particularly striking: it prefigured modern language models by imagining automated textual recombination without human authorship. These weren’t just science fiction fantasies; they represented humanity’s persistent question: could intelligent behavior be replicated in non-living matter?

From Myths to Mechanisms

The 18th and 19th centuries transformed these imaginative precursors into tangible mechanical ingenuity:

Jacques de Vaucanson’s Digesting Duck (1739): Simulated biological processes through cams and bellows, demonstrating that complex behaviors could be mechanically reproduced.

Charles Babbage’s Analytical Engine (1830s): Conceptualized programmable computation with punched cards, conditional branching, and looping.

Ada Lovelace’s 1843 notes on Babbage’s engine observed that such machines could go beyond mere calculation, for example by composing music. She saw what others missed: programmable machines could manipulate symbols, not just numbers.

The First Autonomous Chess Machine

A landmark moment came in 1914 when Leonardo Torres y Quevedo built El Ajedrecista-an electromechanical chess device that autonomously played king-and-rook endgames against a human king. Using logic gates and electromagnetic sensors, it could:

Evaluate board positions without human intervention

Calculate moves to force checkmate

Demonstrate genuine autonomous decision-making

This wasn’t a trick or theatrical illusion. El Ajedrecista represented the first physical implementation of machine intelligence applied to problem solving-bridging myth to engineered systems that mechanized strategic reasoning.

These pre-1940 developments fostered a critical paradigm shift: reasoning itself could be recast as computable processes. This insight primed the formalization of computation and formal logic that would birth AI as a discipline.

Groundwork for AI: Logic, Computers, and Neural Ideas (1940–1956)

The 1940s and 1950s provided the mathematical and technological foundations that transformed artificial intelligence from philosophical speculation into a serious scientific goal. Three convergent streams-electronic computation, neural modeling, and operational definitions of intelligence-made AI possible.

The Electronic Computer Revolution

Early digital computers demonstrated that electronic switching could perform calculations at speeds impossible for mechanical or human computers:

| Machine | Year | Innovation | Speed |
|---|---|---|---|
| Atanasoff-Berry Computer | 1942 | First electronic digital computer using binary arithmetic | ~60 seconds per linear equation |
| ENIAC | 1945 | 18,000 vacuum tubes, programmable via plugboards | 5,000 additions per second |

ENIAC’s ballistic trajectory calculations for the U.S. Army proved that electronic speed could tackle complex tasks previously requiring rooms full of human calculators. Modern computing had arrived.

Birth of Artificial Neural Networks

In 1943, Warren McCulloch and Walter Pitts published “A Logical Calculus of the Ideas Immanent in Nervous Activity.” Their formal neuron model introduced:

Binary threshold logic units simulating biological neurons

Inputs summed and “fired” if exceeding a threshold

Mathematical proof that networks of these units could compute any logical function

This paper connected neurophysiology to Boolean algebra, founding early neural networks and demonstrating that the human brain’s apparent complexity might reduce to implementable logic.
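
The threshold unit described above can be sketched in a few lines. This is a minimal illustration rather than a reproduction of the 1943 formalism: the function names, the use of a negative weight to stand in for an inhibitory input, and the "fires when the sum reaches the threshold" convention are all assumptions made for the example.

```python
def mp_neuron(inputs, weights, threshold):
    """Return 1 (fire) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def AND(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def OR(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def NOT(a):
    # Assumption: an inhibitory input is modelled as a weight of -1.
    return mp_neuron([a], [-1], threshold=0)


def XOR(a, b):
    # XOR is not a single unit; it needs a small network of units:
    # (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", AND(a, b), "OR:", OR(a, b), "XOR:", XOR(a, b))
```

Composing AND, OR, and NOT units is what lets networks of these neurons express arbitrary logical functions, which is the sense in which the model ties neural activity to Boolean algebra.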

Turing’s Operational Definition

Alan Turing’s seminal 1950 paper “Computing Machinery and Intelligence” reframed the question “Can machines think?” into something testable. His imitation game, later called the Turing Test, proposed that a machine succeeds if it is indistinguishable from a human in text-based interrogation.

Turing predicted that within about 50 years computers would play the imitation game well enough that an average interrogator would have no more than a 70% chance of making the right identification after five minutes of questioning. While that timeline proved optimistic, his operational approach gave researchers a concrete target rather than a philosophical abstraction.

Converging Theoretical Streams

Complementary foundations emerged simultaneously:

Claude Shannon’s information theory (1948): Quantified data as bits and entropy, enabling the mathematics underlying machine learning algorithms.

Early game-theoretic analysis: Shannon and others demonstrated that strategic reasoning could be formalized.

These convergences crystallized at the 1956 Dartmouth Conference, organized by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon. Their proposal coined “artificial intelligence” and estimated that a two-month workshop could outline paths to machines using human language, forming abstractions, and solving problems reserved for humans.

They were wildly optimistic about timelines. But they were right that the goal was achievable.

Birth and Early Optimism of AI (1956–1973)

The period from 1956 to 1973 was an era of bold promises. Computer systems solved algebra problems, played games, and manipulated symbols in ways that seemed genuinely intelligent. Many researchers predicted artificial general intelligence within a generation.

The Dartmouth Conference and AI’s Formal Birth

The 1956 Dartmouth Summer Research Project on Artificial Intelligence marks AI’s birth as an academic discipline. The organizers’ proposal declared:

“Every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

This audacious claim-that machine intelligence could replicate human intelligence-set the tone for two decades of ambitious research.

Symbolic AI: Reasoning as Symbol Manipulation

Early AI programs embodied the “physical symbol system hypothesis”: intelligence equals symbol manipulation. Key achievements included:

Logic Theorist (1955-1956): Allen Newell, Herbert Simon, and Cliff Shaw’s program proved 38 of the first 52 theorems in Principia Mathematica using heuristic search.

General Problem Solver (1959): Generalized means-ends analysis for arbitrary puzzles via recursive planning.

SHRDLU (1970): Terry Winograd’s program manipulated virtual blocks in response to natural language commands like “pick up a big red block.”

These programs demonstrated that computer science could tackle knowledge representation and reasoning-not just calculation.

Early Neural and Learning Systems

Not all early research focused on symbolic approaches:

| System | Year | Achievement |
|---|---|---|
| Perceptron | 1958 | Frank Rosenblatt’s single-layer network learned linearly separable patterns through weight adjustments |
| Checkers Program | 1959 | Arthur Samuel coined “machine learning”; his program improved through self-play and defeated its creator by 1962 |

These learning systems suggested machines could improve without explicit programming-a concept that would eventually dominate modern AI.

Conversational and Expert Pioneers

Early attempts at natural language interaction and domain expertise emerged:

ELIZA (1966): Joseph Weizenbaum’s program simulated a psychotherapist through pattern-matching, transforming “I feel sad” into “Why do you feel sad?” Users often believed they were conversing with a human being, even though ELIZA lacked any genuine understanding.

DENDRAL (mid-1960s): Inferred molecular structures from mass spectrometry data using rule induction, birthing expert systems and domain-specific machine intelligence.
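
The pattern-matching idea behind ELIZA can be illustrated with a short sketch. The rules below are hypothetical and written for this example; they are not Weizenbaum's original script, which used a richer keyword-ranking and pronoun-swapping scheme.

```python
import re

# Hypothetical rules: (pattern, response template). Illustrative only.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."


def respond(utterance):
    """Return the response of the first matching rule, or a generic prompt."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Reflect the captured fragment back to the user inside the template.
            return template.format(match.group(1))
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I feel sad"))
    print(respond("Hello there"))
```

Nothing in this loop models meaning: the program only rearranges the user's own words, which is why the impression of understanding was so striking at the time.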

The Growing Gap

Optimism ran high. Marvin Minsky declared in a 1970 Life magazine interview that AI would achieve average human intelligence within three to eight years.

But hardware constraints loomed large. The PDP-1 had only 4K words of memory. Combinatorial explosion meant search spaces grew exponentially. Real-world problems resisted the tidy solutions that worked in constrained laboratory environments.

Minsky and Seymour Papert’s 1969 book Perceptrons exposed that single-layer networks couldn’t solve even simple problems like XOR. The gap between prediction and performance was becoming impossible to ignore.
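
The XOR limitation is easy to reproduce. The sketch below uses the classic perceptron learning rule with integer weights; the function names, epoch budget, and learning rate are assumptions chosen for the example. The unit learns AND, which is linearly separable, but no setting of its weights can classify all four XOR cases.

```python
def predict(weights, bias, x1, x2):
    """Single threshold unit: output 1 when the weighted sum is positive."""
    return 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0


def train_perceptron(samples, epochs=25, lr=1):
    """Perceptron learning rule: nudge weights toward each misclassified sample."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            delta = target - predict(weights, bias, x1, x2)
            if delta != 0:
                weights[0] += lr * delta * x1
                weights[1] += lr * delta * x2
                bias += lr * delta
                errors += 1
        if errors == 0:  # every sample classified correctly: converged
            break
    return weights, bias


def accuracy(samples, weights, bias):
    correct = sum(predict(weights, bias, x1, x2) == t for (x1, x2), t in samples)
    return correct / len(samples)


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

if __name__ == "__main__":
    for name, data in (("AND", AND_DATA), ("XOR", XOR_DATA)):
        w, b = train_perceptron(data)
        print(name, "accuracy:", accuracy(data, w, b))
```

Giving the XOR run more epochs does not help, because a single unit draws one straight line through the input plane and the XOR classes cannot be separated by one line.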

First AI Winter and Critical Reassessment (1973–1980)

An AI winter is a period of waning funding, enthusiasm, and trust in AI promises. The first major winter began in the early 1970s when critical assessments exposed the field’s failures to deliver on grand predictions.

Triggering Reports

Lighthill Report (1973, UK): James Lighthill’s “Artificial Intelligence: A General Survey” lambasted AI’s failure to scale, triggering a 90% funding cut from the Science Research Council.

ALPAC Report (1966, delayed impact): Condemned machine translation as infeasible after $20 million in investment yielded poor results due to unresolved syntactic ambiguities.

Symbolic AI systems faltered on the “knowledge acquisition bottleneck”: hand-coding expertise proved impossibly labor-intensive. Chess search branches at roughly 35 legal moves per position, so even shallow lookahead overwhelmed 1960s hardware. Robotics projects like SRI’s Shakey (1966-1972) required minutes for simple tasks that human beings perform instantly.
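
A quick calculation shows why a branching factor of 35 was so punishing. The sketch assumes a uniform tree in which every position has exactly 35 legal moves, which is a simplification; real positions vary, and pruning techniques reduce the work.

```python
BRANCHING_FACTOR = 35  # average legal moves per chess position, as cited above


def positions_at_depth(depth, branching=BRANCHING_FACTOR):
    """Number of leaf positions in a uniform game tree searched to `depth` plies."""
    return branching ** depth


if __name__ == "__main__":
    for depth in (2, 4, 6, 8):
        print(f"{depth} plies: {positions_at_depth(depth):,} positions")
```

Each additional pair of plies (one move by each side) multiplies the work by 1,225, so exhaustive search runs out of hardware after only a few moves of lookahead.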

Surviving Research

Despite the downturn, important work continued in smaller circles:

Expert systems like MYCIN (1976) diagnosed bacterial infections with about 69% accuracy, rivaling physicians.

Robotics research built on Shakey’s integration of vision, planning, and natural language commands.

Theoretical advances in logic programming via Prolog (1972) enabled declarative reasoning.

This winter forced the field to become more realistic and problem-focused. The painful reassessment prioritized narrow, evaluable tasks over grand generality-lessons that shaped the expert systems boom ahead.

Expert Systems, New Paradigms, and the Second AI Winter (1980–1999)

The 1980s saw commercial success with expert systems and government mega-projects, followed by another crash that redefined AI’s trajectory for a decade.

The Expert Systems Gold Rush

Rule-based expert systems captured domain knowledge from human experts and encoded it as if-then rules. Commercial success stories included:

| System | Organization | Achievement |
|---|---|---|
| MYCIN | Stanford | 450+ rules for bacterial infection diagnosis |
| XCON | Digital Equipment Corp | Configured VAX computers, saving $40 million annually via 10,000 rules |
| R1/XCON derivatives | Multiple | Spawned an entire industry of knowledge engineering |
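
To make the if-then mechanism concrete, here is a minimal forward-chaining sketch. The rules and fact names are hypothetical toy examples, not taken from MYCIN or XCON, and real systems added certainty factors, conflict resolution, and explanation facilities on top of this basic loop.

```python
# Hypothetical toy rules: (set of required facts, fact to conclude).
RULES = [
    ({"fever", "elevated_white_cell_count"}, "possible_bacterial_infection"),
    ({"possible_bacterial_infection", "gram_negative_stain"},
     "consider_gram_negative_organism"),
]


def forward_chain(facts, rules):
    """Apply rules repeatedly until no rule can add a new fact."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


if __name__ == "__main__":
    observed = {"fever", "elevated_white_cell_count", "gram_negative_stain"}
    print(sorted(forward_chain(observed, RULES)))
```

Every conclusion traces back to an explicit rule, which made such systems auditable, but every rule also had to be written and maintained by hand.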

Japan’s Fifth Generation Computer Systems project (1982-1992) invested $850 million aiming to build Prolog-based inference machines. Lisp machines from Symbolics peaked at $30,000 units for symbolic processing.

Corporate investment surged. AI seemed ready for prime time.

Alternative Paradigms Emerge

The 1980s weren’t just about expert systems. Alternative approaches gained traction:

Connectionism revived: Rumelhart, Hinton, and Williams popularized backpropagation in 1986, training multilayer neural networks via gradient descent to solve problems like XOR that had defeated single-layer networks.

Probabilistic reasoning: Judea Pearl’s 1988 Probabilistic Reasoning in Intelligent Systems introduced Bayesian networks for handling uncertainty via directed acyclic graphs.

Fuzzy logic: Found practical applications in control systems, powering consumer appliances and automotive braking systems.

These paradigms would eventually overtake symbolic AI, but that transition took decades.

Milestones of the Era

Notable achievements demonstrated AI’s expanding reach:

WABOT-2 (1984): A humanoid robot that played piano using computer vision and audition.

Carnegie Mellon’s Navlab (1984): Early autonomous vehicles traversing 20 miles via vision systems.

Apple’s Knowledge Navigator (1987): A concept video envisioning hypermedia virtual assistants-remarkably prescient about future AI capabilities.

The Second Crash

By the late 1980s, the expert systems boom collapsed:

Systems proved brittle-XCON required 100 person-years of maintenance.

Japan’s Fifth Generation underdelivered on its ambitious goals.

The 1987 Lisp machine crash wiped out approximately $2 billion in investments.

Knowledge acquisition remained a bottleneck; teaching machines domain expertise was exhausting.

Investors lost patience. Funding dried up. Another AI winter had arrived.

Mathematical Rigor and Narrow AI in the 1990s

The 1990s marked a fundamental shift from grand “human-level AI” visions to narrow, well-defined tasks with measurable performance. This wasn’t retreat-it was maturation.

The Statistical Turn

Machine learning emerged as a statistics-driven discipline. Key developments included:

Support vector machines (Vapnik, 1995) excelled at classification tasks.

Decision trees and ensemble methods complemented neural network approaches.

Rigorous evaluation on held-out test sets replaced hand-wavy demonstrations.

Foundational Architectures

Technologies that would later power the deep learning revolution emerged quietly:

LSTM networks (Hochreiter/Schmidhuber, 1997): Mitigated vanishing gradient problems for sequence modeling.

Convolutional neural networks (LeCun’s work throughout the 1990s): Achieved 90%+ accuracy on handwriting recognition.

The Intelligent Agent Paradigm

AI was reframed as the study of systems that perceive, decide, and act to maximize performance in specific environments. This formulation, grounded in Markov decision processes and reinforcement learning, provided mathematical rigor that earlier symbolic approaches lacked.

Keeping AI Credible

Despite reduced public attention, significant milestones maintained visibility:

Deep Blue (1997): Defeated world chess champion Garry Kasparov using brute-force search that analyzed 200 million positions per second.

Dragon NaturallySpeaking (1997): Brought speech recognition to consumers.

Search engines and recommendation systems quietly deployed AI techniques at scale.

This increased rigor laid groundwork for the revolution that would follow.

Big Data, Deep Learning, and the Road to Generative AI (2000–2016)

The 2000s through mid-2010s transformed AI from a niche research field into practical technology enabled by the web, GPUs, and massive datasets. This was the AI boom that never fully busted.

The GPU Computing Revolution

Around 2009, researchers including Andrew Ng demonstrated that training neural networks on NVIDIA GPUs achieved 70x speedups compared to CPUs. Suddenly, experiments that would have taken months completed in days.

This wasn’t just faster-it was transformative. Networks could scale from 2 layers to 9, from thousands of neurons to millions.

The Data Explosion

Web-scale data provided fuel for statistical pattern recognition:

ImageNet (begun 2007, released 2009): Grew to more than 14 million labeled images across thousands of categories.

Speech and text corpora from the web enabled language translation at unprecedented scale.

Social media generated petabytes of human-generated content for training.

Breakthrough Results

2012 marked a watershed year. AlexNet, developed by Krizhevsky, Sutskever, and Hinton, won the ImageNet competition with a top-5 error rate of 15.3%, far ahead of the roughly 26% achieved by the next-best entry. The winning formula:

8-layer convolutional neural network

ReLU activations instead of sigmoids

Dropout for regularization

GPU training

That same year, Google’s unsupervised “cat detector” learned to identify felines from YouTube videos without labeled training data-demonstrating that deep learning techniques could discover complex patterns autonomously.

Landmark Applications

AI entered everyday human life through visible applications:

| Application | Year | Achievement |
|---|---|---|
| IBM Watson | 2011 | Won Jeopardy! by ingesting 200 million pages for confidence-weighted answers |
| Apple Siri | 2011 | Fused natural language processing and knowledge graphs into consumer phones |
| Mars Rovers | 2004+ | Employed autonomous hazard navigation using A* pathfinding |
| Social Robots | 1990s-2000s | MIT’s Kismet and consumer products like Furby demonstrated recognition of human emotions |

These weren’t laboratory curiosities. They were products millions of human beings interacted with daily.

AlphaGo: Deep Reinforcement Learning’s Triumph

DeepMind’s AlphaGo defeating world Go champion Lee Sedol 4-1 in March 2016 signaled that deep reinforcement learning could tackle strategic complexity once thought impossible for computer systems.

Go’s search space (~10^170 possible positions) dwarfed chess. Brute force couldn’t work. Instead, AlphaGo combined:

Policy and value networks trained on expert games

Monte Carlo tree search for lookahead

Self-play generating 1.6 million training games

Distributed computing across 1,920 CPUs and 280 GPUs

This wasn’t just gaming-it demonstrated AI’s ability to master domains requiring intuition and pattern recognition, not just calculation.

Shifting Toward Artificial General Intelligence (AGI) Concerns

As deep learning scaled, researchers and the public revived concerns about AGI: intelligent systems that could match or exceed human versatility across all domains.

Defining AGI and ANI

Artificial Narrow Intelligence (ANI): Current AI systems are classified as ANI, designed for specific tasks.

Artificial General Intelligence (AGI): AGI refers to future AI systems that can perform any intellectual task a human can.

Early Warning Signals

I.J. Good’s 1965 “intelligence explosion” concept-recursive self-improvement leading to superintelligence-gained renewed attention. If AI could improve AI, acceleration might become exponential.

Safety-Focused Organizations

DeepMind (founded 2010): Explicitly pursued AGI while emphasizing safety research.

OpenAI (founded 2015): Stated goals of developing safe, beneficial AGI.

These weren’t fringe concerns. Major scientific research institutions and well-funded labs took existential risk seriously alongside capability research.

This period’s successes and emerging fears set the stage for explosive interest in large language models and generative AI that would arrive from 2017 onward.

Transformers, Large Language Models, and Generative AI (2017–2024)

The 2017 transformer architecture unlocked today’s wave of generative AI: AI systems that can write, code, draw, speak, and generate video at near-human quality. This represents the most visible AI acceleration in history.

Attention Is All You Need

The 2017 paper “Attention Is All You Need” by Vaswani and colleagues at Google replaced recurrent architectures with self-attention mechanisms. Key innovations:

Parallelizable training across entire sequences

Multi-head attention capturing different relationship types

Positional encodings preserving sequence information

Transformers achieved state-of-the-art on language translation and proved far more scalable than previous approaches.
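
The core operation, scaled dot-product self-attention, can be sketched in plain Python. This is a simplified single-head version written for illustration: it uses the token vectors directly as queries, keys, and values, whereas a real transformer applies learned projection matrices, runs several heads in parallel, and adds positional encodings. The toy token vectors are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def self_attention(queries, keys, values):
    """Compute softmax(Q K^T / sqrt(d_k)) V one query at a time."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of all value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Toy 2-dimensional "token embeddings" (hypothetical values).
TOKENS = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

if __name__ == "__main__":
    outputs, weights = self_attention(TOKENS, TOKENS, TOKENS)
    for row in weights:
        print([round(w, 3) for w in row])
```

Because each query's weights depend only on dot products with the full set of keys, every position can be processed independently, which is what makes training parallelizable across the sequence.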

The Large Language Model Era

Transformers enabled training on unprecedented scales:

| Model | Year | Parameters | Training Data / Notes |
|---|---|---|---|
| GPT-2 | 2019 | 1.5 billion | WebText |
| GPT-3 | 2020 | 175 billion | 45TB of text |
| GPT-4 | 2023 | Undisclosed | Multimodal training |
| Gemini 1.5 | 2024 | Undisclosed | 1M+ token context |

GPT-3’s 2020 release demonstrated zero-shot and few-shot learning: performing tasks from a description or a handful of examples in the prompt, without task-specific training. This “in-context learning” suggested general capabilities emerging from scale.

ChatGPT: AI Goes Mainstream

OpenAI’s November 2022 launch of ChatGPT-a conversational interface to GPT-3.5-represented a landmark moment. Within two months, it reached over 100 million users, becoming the fastest-growing consumer application in history.

For the first time, the average human could interact with a system that could:

Answer questions across virtually any domain

Assist with writing code and debugging

Draft emails, essays, and creative content

Translate languages in context

The AI chatbot had arrived as a mass-market product.

Multimodal Expansion

Generative AI rapidly expanded beyond text:

DALL-E (2021, 2023): Text-to-image generation using CLIP and diffusion models.

Stable Diffusion (2022): Open-source image generation producing 512x512 images in seconds.

Sora (2024): Text-to-video model generating up to 60-second clips of realistic scenes.

Voice synthesis and cloning: Near-perfect replication of human speech patterns.

Today’s generative AI doesn’t just process information-it creates new content across modalities.

Practical Applications Multiply

Commercial deployment accelerated:

GitHub Copilot: Boosted developer productivity by 55% according to internal studies, fundamentally changing coding workflows.

Microsoft Copilot: Integrated AI across Office applications.

Tesla FSD v12 (2024): Moved to end-to-end neural network control for autonomous driving.

Protein structure prediction: AlphaFold2 and AlphaFold3 accelerated drug discovery, reportedly by as much as 10x.

Economic projections estimate AI could add $15.7 trillion to global GDP by 2030 (PwC). The stakes have never been higher.

Ethics, Alignment, and Regulation in the Generative Era

Modern AI’s power has made safety, bias, and control central to every conversation about deployment. These aren’t theoretical concerns-they’re daily operational challenges.

Common Issues

Training data bias: Models trained on internet text inherit societal biases; approximately 80% of training data is English.

Hallucinations: Current models can present fabrications as fact; reported rates of 15-30% depend heavily on the model and the task.

Privacy concerns: Training on personal data raises consent questions.

Intellectual property disputes: Lawsuits like NYT vs. OpenAI test copyright boundaries.

Misuse potential: Disinformation, fraud, and cyberattack facilitation.

The Alignment Problem

Ensuring AI systems pursue goals compatible with human values represents perhaps the central technical challenge. Approaches include:

Reinforcement learning from human feedback (RLHF)

Constitutional AI approaches

Red-teaming and adversarial testing

Interpretability research

Emerging Governance

Regulatory responses are materializing:

EU AI Act (2024): Tiers AI applications by risk level, prohibiting certain manipulative uses.

US Executive Orders: Mandate safety testing for powerful models.

Voluntary commitments from major labs on testing and transparency.

The tension between rapid innovation and responsible data governance will define AI deployment for years to come.

Where AI Evolution Is Heading Next

Predicting AI’s future is risky-the field has humbled forecasters repeatedly. But certain trajectories are visible from current research and investment patterns.

Agentic AI

The next frontier moves beyond content generation to action. Agentic AI systems:

Plan multi-step workflows autonomously

Execute tasks via APIs and tool use

Coordinate across systems with minimal human intervention

Handle exceptions and adapt to feedback

Early examples like Auto-GPT chain LLM calls to accomplish goals that would have required hours of human work. These AI agents represent a fundamental shift from answering questions to accomplishing objectives.

Efficiency and Specialization

Not all progress requires trillion-parameter models:

Smaller capable models: Phi-3 mini achieves strong performance with 3.8 billion parameters, largely through heavily filtered and synthetic training data.

On-device AI: Quantization enables Llama 3.2 to run on phones.

Domain specialists: AlphaFold3 (2024) predicts biomolecular interactions with stunning accuracy for scientific research.

The future likely involves a spectrum from massive frontier models to efficient specialized systems.
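
Quantization, mentioned above as the technique that lets models fit on phones, trades a little precision for a large drop in memory. The sketch below shows one simple variant, symmetric per-tensor 8-bit quantization, on a made-up weight list. It is an assumption-laden illustration and not a description of how any particular model such as Llama 3.2 is actually quantized.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical example weights.
WEIGHTS = [0.42, -1.27, 0.05, 0.9]

if __name__ == "__main__":
    q, scale = quantize_int8(WEIGHTS)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(WEIGHTS, restored))
    print("int8 values:", q)
    print("max round-trip error:", worst)
```

Each weight now needs one byte instead of four (for 32-bit floats), and the round-trip error is bounded by half the scale factor, which is often small enough to leave model quality largely intact.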

Multimodal Context-Rich Systems

Boundaries between modalities are dissolving:

Gemini 1.5’s 1M+ token context window processes entire codebases or book-length documents.

Vision-language-action models (RT-2) enable robots to follow natural language instructions.

Sensor fusion combines camera, lidar, and other inputs for autonomous systems.

These intelligent systems blur lines between perception, reasoning, and action in ways that increasingly mirror human cognition.

Governance and Standards

Alongside technical evolution, expect growing emphasis on:

Watermarking (e.g., Google’s SynthID) for synthetic content identification.

Red-teaming requirements for model releases.

Audit frameworks for high-stakes deployments.

Industry standards for data-driven decision making with AI.

Investment in AI now exceeds $100 billion annually. The stakes demand mature governance.

Staying Informed Without Drowning

The pace of change makes it nearly impossible to track every development personally. Yet staying informed is essential for career relevance, business strategy, and informed citizenship.

This is precisely why KeepSanity AI exists: one weekly, carefully curated email capturing the real signal in AI’s evolution. No daily filler. Zero ads. Just the major developments that actually matter-model releases, regulatory moves, business-relevant trends-so you can adapt your strategies without sacrificing your focus.

The noise is gone. Here is your signal.

FAQ

How is today’s generative AI different from earlier expert systems?

The difference is fundamental. 1980s expert systems like MYCIN relied on hand-coded if-then rules-human experts literally dictated the logic (“if fever > 101°F and white blood cell count elevated, then consider bacterial infection”). These systems achieved narrow competence (MYCIN hit 69% diagnostic accuracy) but were brittle and couldn’t generalize beyond their programmed rules.

Modern generative AI like GPT-4 learns statistical patterns from trillions of tokens through gradient descent and backpropagation. No human explicitly programs the rules-the network discovers them from data. This enables flexibility across tasks (translation, coding, analysis) that expert systems couldn’t touch. However, LLMs lack interpretable rules and can hallucinate confidently, creating different reliability challenges.

Does the rapid evolution of AI mean Artificial General Intelligence is close?

Opinions diverge sharply among experts. Some researchers view current LLMs as early steps toward AGI, pointing to emergent capabilities that weren’t explicitly trained. Others, like Yann LeCun, argue these systems are sophisticated “autocompletion engines” still lacking robust reasoning, causal understanding, and real-world grounding.

Current systems excel on benchmarks but struggle with reliability, long-term planning, and novel situations outside their training distribution. Performance on reasoning tests like ARC remains 30-50% versus human scores around 85%. Rather than fixating on AGI timelines, professionals should focus on managing the real, present-day impacts of increasingly capable narrow and generative AI.

What skills should professionals build to stay relevant as AI evolves?

The most resilient approach combines AI literacy with deep domain expertise:

AI fundamentals: Understanding prompting techniques, basic ML concepts, and model limitations.

Integration skills: Incorporating AI tools into workflows, validating outputs, and recognizing when AI assistance is appropriate.

Critical evaluation: Identifying hallucinations, bias, and inappropriate confidence.

Domain depth: AI amplifies expertise but can’t replace it; knowing your field deeply makes you a more effective AI user.

Following curated sources like KeepSanity AI helps track major shifts efficiently without the impossible task of monitoring daily developments across dozens of sources.

Why do “AI winters” happen, and could we see another one?

AI winters followed periods where promises vastly exceeded delivery. The 1970s winter hit after predictions of imminent human-level AI collided with hardware limitations and the knowledge acquisition bottleneck. The late 1980s crash came when expert systems proved expensive to maintain and Japan’s Fifth Generation project underdelivered.

A full winter is less likely now given AI’s clear commercial value-Fortune 500 adoption exceeds 30% and valuations top $200 billion. However, corrections are possible if current models hit fundamental scaling limits (some researchers already observe diminishing returns at the frontier), if regulatory constraints slow deployment, or if highly publicized failures erode trust.

Importantly, even during past winters, foundational research continued. AI evolution is more gradual and cumulative than boom-bust headlines suggest.

How can I keep up with AI’s evolution without wasting hours every day?

The pace since 2020 makes personally tracking every paper, model release, and product launch unrealistic. Major AI labs now ship updates faster than any individual can absorb.

The solution is curated, low-noise sources that filter for genuinely important developments rather than incremental updates designed to keep you clicking:

Major model releases

Significant regulatory changes

Business-relevant trends

KeepSanity AI is purpose-built for exactly this problem: one concise, ad-free email per week capturing the real signal. Subscribed by teams at organizations like Bards.ai, Surfer, and Adobe, it delivers what matters without the daily inbox overwhelm. Lower your shoulders. The noise is gone.