Artificial Learning: How Machines Actually Learn in 2025

Introduction

Artificial learning is a subfield of artificial intelligence (AI) concerned with the ability of machines to learn, adapt, and mimic human cognitive processes. Concretely, it refers to a computer program learning from large amounts of data and improving its performance over time without being explicitly programmed for every specific task. In 2025, understanding artificial learning is essential for professionals, students, executives, and anyone interested in how AI systems like ChatGPT, Gemini, and self-driving cars are transforming industries. This article explains how artificial learning works, covering the foundational concepts, practical workflows, real-world applications, and the challenges and opportunities it presents. Whether you are a business leader seeking to leverage AI, a student exploring the field, or a technical professional aiming to deepen your knowledge, this guide will help you navigate the rapidly evolving landscape of artificial learning and its impact on society.

Key Takeaways

Artificial learning is the process by which systems like ChatGPT, Gemini, and self-driving cars learn from massive datasets rather than explicit programming rules.

It is a subfield of artificial intelligence (AI) in which computer systems improve their performance by learning from data and feedback rather than from hand-written rules, adapting over time and mimicking aspects of human cognition.

Artificial learning is primarily achieved through machine learning (ML), which uses algorithms to analyze datasets, recognize patterns, and make decisions.

It’s implemented mainly through machine learning, deep learning, and reinforcement learning, each suited to different problem types and feedback structures.

The practical workflow follows a clear path:

Collect data

Train models (often on GPU/TPU clusters)

Evaluate performance

Deploy

Continuously update based on new data

Artificial learning now powers real-world systems in 2025 across healthcare, finance, manufacturing, and media, while raising concrete risks around bias, privacy, and energy use.

Staying informed without drowning in daily noise is critical: curated sources like KeepSanity AI deliver only the major developments each week.

What Is Artificial Learning?

Artificial learning, as used in this article, is the ability of a computer program to learn from large amounts of data and improve its performance over time without being explicitly programmed for every specific task. It is primarily achieved through machine learning (ML), which uses algorithms to analyze datasets, recognize patterns, and make decisions.

Key Definitions:

Artificial learning: A subfield of AI that refers to the ability of computer systems to improve their performance by learning from data and feedback rather than from hand-written rules. It encompasses the overarching ability of machines to learn, adapt, and mimic human cognitive processes, and is primarily achieved through machine learning.

Machine learning (ML): A subset of artificial intelligence that focuses specifically on enabling machines to learn from datasets without explicit programming. ML is a field of study in AI concerned with the development and study of statistical algorithms that can learn from data and generalize to unseen data.

Deep learning: A subset of machine learning that uses artificial neural networks with many layers to learn complex patterns in data, powering the most impressive AI demonstrations of the past decade.

This article covers the supervised, unsupervised, reinforcement, and generative approaches that underpin modern AI systems. These aren’t abstract concepts; they’re the engines driving the tools millions of people use every day.

Consider the 2023–2025 landscape:

Large language models like GPT-4 (released March 2023, with rumored 1.7 trillion parameters) and Google’s Gemini 1.5 Pro (announced February 2024, handling up to 1 million tokens of context)

Image generators like Midjourney v6 and DALL-E 3, producing photorealistic visuals from text prompts

Autonomous driving stacks from Tesla’s Full Self-Driving v12.5 (trained on billions of miles of fleet data) and Waymo’s sixth-generation robotaxi

All of them rely on artificial learning to perform tasks that would be impossible to encode through traditional programming.

The roots of this field trace back to the 1950s: Alan Turing’s 1950 paper proposing learning machines, then Frank Rosenblatt’s perceptron in 1958. But modern artificial learning took off after the 2012 AlexNet breakthrough on ImageNet, which achieved a top-5 error rate of 15.3%, against 26.2% for the runner-up, using deep neural networks trained on GPUs. That moment ignited the deep learning revolution we’re still living through.

This article focuses on how machines learn from data in practice, not on speculative “strong AI” or consciousness.

The Foundations of Artificial Learning

Artificial learning is grounded in three interdependent components: data, models, and compute infrastructure. Understanding these foundations helps you evaluate any AI system’s capabilities and limitations.

Training Data

Training data consists of large collections of input-output pairs. For image recognition, that means photos tagged with labels like “cat” or “dog.” For large language models, it means text with next-word targets. For self-driving cars, it means sensor data paired with steering commands.
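To make "text with next-word targets" concrete, here is a minimal sketch of how raw text can be turned into input-output pairs. It assumes whitespace-separated words and a fixed context window; the function name `next_word_pairs` is hypothetical, and production language models work on subword tokens rather than whole words.

```python
def next_word_pairs(text, context_size=3):
    """Turn raw text into (context, target) pairs for next-word prediction."""
    words = text.split()
    pairs = []
    for i in range(context_size, len(words)):
        # The input is the preceding `context_size` words; the label is the next word.
        context = tuple(words[i - context_size:i])
        pairs.append((context, words[i]))
    return pairs


pairs = next_word_pairs("the cat sat on the mat", context_size=2)
for context, target in pairs:
    print(context, "->", target)
```

The labels here come for free from the text itself, which is why this style of data scales to web-sized corpora without human annotators.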

Industrial systems often use datasets with billions of examples:

| Dataset | Size | Use Case | Collection Period |
|---|---|---|---|
| Common Crawl | Petabytes of web text | LLM pretraining | 2016–2023 |
| LAION-5B | 5.85 billion image-text pairs | Vision models | 2022 |
| Tesla Fleet Data | 10+ billion miles annually | Autonomous driving | 2019–2024 |

Quality matters as much as quantity. Deduplication can reduce LAION from 5.85B to an effective 2-3B unique pairs. Filtering removes toxicity and data bias that could skew outputs.

Models

Models encode data relationships through parameters. The architecture you choose depends on your problem:

Decision trees and gradient-boosted ensembles (like XGBoost, LightGBM) excel on tabular data

Convolutional neural networks handle image tasks

Transformers dominate natural language processing and increasingly vision

Frontier models in 2024-2025 can reach hundreds of billions of parameters: GPT-3 (2020) had 175 billion, and rumored figures for its successors approach 1.8 trillion. These multilayered neural networks, usually called deep neural networks, learn complex patterns that simpler architectures miss.

Learning as Optimization

Learning manifests as parameter optimization. The system iteratively minimizes a loss function (a measure of prediction error) using techniques like:

Stochastic gradient descent (SGD) and variants such as the Adam optimizer

Backpropagation for computing gradients through neural network layers

Mini-batch processing that balances memory and convergence speed

Each training step nudges parameters slightly toward better predictions, repeated millions or billions of times.
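The loop described above can be sketched in a few lines. This is an illustrative toy, not how any production framework is implemented: it fits a one-variable linear model with mini-batch SGD on mean squared error, and the learning rate, batch size, and function name `train_linear_sgd` are assumptions chosen for the example. Gradients are written out by hand here; in a neural network, backpropagation computes them layer by layer.

```python
import random


def train_linear_sgd(xs, ys, lr=0.05, epochs=500, batch_size=4, seed=0):
    """Fit y ~ w*x + b by mini-batch SGD on mean squared error."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)  # visit the data in a new random order each epoch
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Gradients of the mean squared error over this mini-batch.
            grad_w = sum(2 * (w * xs[i] + b - ys[i]) * xs[i] for i in batch) / len(batch)
            grad_b = sum(2 * (w * xs[i] + b - ys[i]) for i in batch) / len(batch)
            # Nudge each parameter a small step against its gradient.
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b


# Synthetic data generated from y = 3x + 1, so the ideal parameters are known.
xs = [i / 10 for i in range(20)]
ys = [3.0 * x + 1.0 for x in xs]
w, b = train_linear_sgd(xs, ys)
print(f"learned w={w:.3f}, b={b:.3f}")
```

The same three steps (compute loss gradient on a batch, update parameters, repeat) carry over to models with billions of parameters; only the scale and the gradient computation change.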

Compute Infrastructure

Modern artificial learning requires specialized hardware:

NVIDIA GPUs (since ~2010) enabled parallel matrix operations that made deep learning practical

Google TPUs (since 2016) optimized tensor operations for neural networks; TPU v5p is rated at roughly 459 teraflops (bf16) per chip, with thousands of chips per pod

Custom accelerators like AWS Trainium2, which AWS says trains models up to 4x faster than first-generation Trainium

Training Llama 3.1’s 405B-parameter model took roughly 30.8 million H100 GPU-hours according to Meta’s model card, which at typical cloud rental prices puts compute costs on the order of $100 million. Distributed training across thousands of chips, using frameworks like PyTorch’s Fully Sharded Data Parallel, has become essential for frontier systems.

Artificial Learning vs. Machine Learning (and Other AI Terms)

Terminology in this field often blurs together, especially in media coverage since ~2022. Let’s establish clear distinctions that matter when evaluating capabilities, risks, or regulation.

| Term | Definition | Example Applications |
|---|---|---|
| Artificial learning | The high-level concept of machines exhibiting learning behavior from experience. Encompasses both symbolic and neural approaches to improvement through data exposure. | All data-driven AI systems |
| Machine learning | A subset of artificial intelligence that focuses specifically on enabling machines to learn from datasets without explicit programming. Involves statistical and algorithmic methods like random forests, support vector machines, gradient boosting, and neural networks. | Fraud detection, recommendation |
| Deep learning | A subset of machine learning using artificial neural networks with many layers. Powers the most impressive AI demonstrations of the past decade. | Vision, language, generative models |
| Generative AI | Models that create new content (text, images, code, audio, video) using deep learning techniques. | ChatGPT, DALL-E, Stable Diffusion |

Here’s how these terms map to real applications:

| Application | Primary Technique | Category |
|---|---|---|
| Banking fraud detection | Gradient-boosted trees, isolation forests | Machine learning |
| Netflix recommendations | Transformer embeddings on user behavior | Deep learning |
| Midjourney image creation | Cascaded diffusion models | Generative AI |

All three fall under artificial learning. The hierarchy works like concentric circles: artificial intelligence encompasses the broadest set of approaches; machine learning focuses on data-driven pattern generalization; deep learning leverages multilayer neural architectures; generative AI applies these to content creation.

Being precise with terminology helps you evaluate vendor claims, understand regulatory requirements, and communicate clearly with technical teams.

Traditional machine learning methods like random forests are often the stronger choice on structured tabular data, where deep learning would be overkill. Meanwhile, deep learning models dominate unstructured data such as vision and language tasks, where ImageNet top-5 error rates have dropped below 3%.

How Artificial Intelligence Learning Works in Practice

Understanding the AI and machine learning development lifecycle helps demystify what happens between “we have an idea” and “the system is live.” Here’s how machine learning systems move from raw data to deployed, continuously learning applications.

Data Collection

Every artificial learning project starts with data collected from diverse sources:

Application logs from websites and mobile apps capturing user behavior

Sensor feeds from IoT devices (Tesla’s fleet reportedly generates roughly 1 petabyte daily)

Transaction records from banks and payment processors

Web crawls used for pretraining large language models (Common Crawl captured 3+ trillion tokens through 2023)

Data quality often determines project success more than model sophistication. Garbage in, garbage out remains the fundamental truth of machine learning operations.

Preprocessing and Labeling

Raw data rarely arrives ready for training. Preprocessing steps include:

Cleaning and deduplication using techniques like MinHash-LSH (often achieving 50% size reduction)

Normalization via z-score scaling to standardize feature ranges

Anonymization through k-anonymity or differential privacy to protect user data

Labeling via human annotators or weak supervision

Platforms like Scale AI have labeled over 1 billion tokens between 2020 and 2025, often at $0.01 to $0.10 per example. This human-in-the-loop process creates the ground-truth labels that supervised learning requires.
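Two of the preprocessing steps listed above, deduplication and z-score normalization, can be sketched as follows. Note the simplification: this example removes exact duplicates after trimming whitespace and lowercasing, as a stand-in for near-duplicate detection with MinHash-LSH, which is considerably more involved. The function names are hypothetical.

```python
import statistics


def deduplicate(records):
    """Drop exact duplicates (ignoring case and surrounding whitespace),
    keeping the first occurrence of each record."""
    seen = set()
    unique = []
    for record in records:
        key = record.strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def z_score(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]  # a constant feature carries no signal
    return [(v - mean) / std for v in values]


captions = ["Cat photo", "cat photo ", "Dog photo"]
print(deduplicate(captions))

ages = [20.0, 40.0, 60.0]
print(z_score(ages))
```

Standardizing features this way keeps inputs with large numeric ranges from dominating gradient updates during training.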

Training

With prepared data, teams select an architecture: ResNet for images, BERT-base (110M parameters) for text, transformer decoders for generation. The training process involves:

Weight initialization using methods like Xavier/Glorot to reduce the risk of vanishing or exploding gradients

Mini-batch gradient descent across GPU/TPU clusters

Monitoring validation performance to prevent overfitting

Meta’s 2024 disclosure revealed that Llama 3’s 405B model was trained on 15 trillion tokens, an illustration of frontier-scale data and compute requirements.
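One common way to act on "monitoring validation performance to prevent overfitting" is early stopping. The sketch below is an assumption about how a team might implement it, not a description of any specific lab's pipeline: it scans a list of per-epoch validation losses and stops once the loss has failed to improve for `patience` consecutive epochs. The loss values are made up for illustration.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the best epoch, stopping once validation loss
    has failed to improve for `patience` consecutive epochs."""
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # validation loss has stalled; stop training here
    return best_epoch


# Validation loss falls, then starts rising as the model begins to overfit.
history = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.40]
best = early_stopping_epoch(history, patience=2)
print("restore checkpoint from epoch", best)
```

With `patience=2` the loop stops after epoch 5 and never sees the later improvement at epoch 6, which shows the trade-off: a small patience saves compute but can end training too soon.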

Evaluation and Validation

Before deployment, machine learning engineers test models rigorously:

| Metric | Use Case | Good Target |
|---|---|---|
| Accuracy | General classification | Task-dependent |
| F1 Score | Imbalanced classes | 0.9+ |
| ROC-AUC | Binary classification | 0.95+ |
| BLEU | Translation quality | 0.3+ |
| Perplexity | Language model quality | Lower is better |

Cross-validation (typically 5-fold) averages results over several train/test splits, giving a more stable performance estimate than a single holdout set. Stress-testing for bias and adversarial robustness catches problems before users encounter them.
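As a rough illustration of two ideas from this section, the sketch below computes precision, recall, and F1 from scratch and generates index splits for k-fold cross-validation. The labels are invented toy data, and the helper names are hypothetical; in practice a library such as scikit-learn provides equivalents.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, test_indices) for k contiguous folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, test
        start += size


# Imbalanced toy labels: 3 positives out of 10.
y_true = [1, 0, 0, 0, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 0, 0, 0, 0, 1, 0, 0, 1]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")

folds = list(k_fold_indices(len(y_true), k=5))
```

On this toy data accuracy is 0.80 while F1 is about 0.67, which is why F1 is the more informative metric when classes are imbalanced. Real cross-validation would usually shuffle or stratify the data before splitting; the contiguous folds here keep the example short.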

Deployment

Production deployment involves several technical steps:

Model compression through 4-bit quantization (cutting weight memory to about a quarter of 16-bit precision, usually at a small cost in accuracy)

Serving infrastructure via APIs or edge devices

Drift monitoring using statistical tests like Kolmogorov-Smirnov to detect when input data distributions shift

When user behavior or real-world data patterns change, as happened dramatically from 2020 onward during the pandemic, systems must trigger retraining.
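The drift check mentioned above can be sketched with a hand-rolled two-sample Kolmogorov-Smirnov statistic. This is a minimal illustration under stated assumptions: the helper names are hypothetical, the threshold uses the standard large-sample approximation with coefficient 1.358 for a 5% significance level, and a production system would more likely call a library routine such as `scipy.stats.ks_2samp`.

```python
import math


def ks_statistic(sample_a, sample_b):
    """Two-sample KS statistic: the largest gap between the two
    empirical cumulative distribution functions."""
    a, b = sorted(sample_a), sorted(sample_b)
    i = j = 0
    max_gap = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance both pointers past every value <= x, then compare the CDFs at x.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        max_gap = max(max_gap, abs(i / len(a) - j / len(b)))
    return max_gap


def drift_detected(reference, live, c_alpha=1.358):
    """Flag drift when the KS statistic exceeds the approximate critical
    value for a 5% significance level (large-sample approximation)."""
    n, m = len(reference), len(live)
    critical = c_alpha * math.sqrt((n + m) / (n * m))
    return ks_statistic(reference, live) > critical


# Reference feature values seen at training time vs. two live windows.
reference = [i / 100 for i in range(100)]
live_same = list(reference)
live_shifted = [x + 0.5 for x in reference]

print("same distribution ->", drift_detected(reference, live_same))
print("shifted distribution ->", drift_detected(reference, live_shifted))
```

In a deployed system a check like this would run per feature on a schedule, with a positive result raising an alert or queuing a retraining job.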

Continuous Learning

Modern AI applications don’t remain static. Continuous learning approaches include:

Online learning with incremental SGD updates

Periodic fine-tuning using LoRA adapters (which can cut the number of trainable parameters by up to 10,000x compared with full fine-tuning)

Reinforcement learning from human feedback (RLHF), used since 2022 on models like InstructGPT to improve alignment through 30,000+ comparison pairs

This training process never truly ends for systems serving evolving user needs.
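The savings from LoRA-style fine-tuning come from simple arithmetic: instead of updating a full d_out x d_in weight matrix, the method trains two thin matrices of rank r, giving r x (d_out + d_in) trainable values. The sketch below works through that count for a single layer; the 4096 x 4096 layer size and rank 8 are hypothetical values chosen for illustration, and the function names are not from any library.

```python
def full_params(d_out, d_in):
    """Trainable values when the whole weight matrix is fine-tuned."""
    return d_out * d_in


def lora_trainable_params(d_out, d_in, rank):
    """Trainable values for a rank-`rank` update B @ A, where
    B is d_out x rank and A is rank x d_in (the base matrix stays frozen)."""
    return rank * (d_out + d_in)


d = 4096  # hypothetical hidden size for one projection layer
full = full_params(d, d)
lora = lora_trainable_params(d, d, rank=8)
print(f"full fine-tuning: {full:,} trainable values")   # 16,777,216
print(f"LoRA (rank 8):    {lora:,} trainable values")   # 65,536
print(f"reduction:        {full // lora}x")              # 256x
```

The per-layer reduction here is 256x. Much larger whole-model ratios arise when adapters are applied to only a few matrices in a very large model while everything else stays frozen.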

Types of Artificial Learning

Artificial learning techniques differ mainly in the kind of feedback available (labels, rewards, or none) and the structure of the problem. Understanding these machine learning techniques helps you match solutions to business challenges.

Supervised Learning: Uses labeled input-output pairs to teach models. The system learns to map inputs to correct outputs by analyzing data with known answers. Common algorithms include logistic regression, random forests, and deep neural networks. Applications: spam detection, medical image classification, credit scoring.

Unsupervised Learning: Works without labels, finding hidden patterns in data through clustering (e.g., k-means), dimensionality reduction (PCA, t-SNE, UMAP), and anomaly detection. Applications: customer segmentation, intrusion detection.

Reinforcement Learning: Models agents interacting with environments, receiving rewards that guide behavior. The system optimizes policies through trial-and-error. Applications: AlphaGo, robotic control, ad bidding systems.

Self-Supervised Learning: Predicts missing parts of data (masked tokens in text, hidden patches in images), dramatically reducing labeling requirements. Applications: large language models, vision transformers.

Genetic and Evolutionary Algorithms: Apply evolution-inspired optimization: populations of solutions undergo mutation and crossover, with the fittest surviving. Applications: hyperparameter optimization, architecture search, game strategies.
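The evolutionary loop described above (selection, crossover, mutation) can be sketched on a toy problem. This example is only an illustration: it evolves bit strings toward the all-ones string, using the count of ones as fitness, and every parameter value and the function name `evolve` are assumptions made for the demo.

```python
import random


def evolve(fitness, genome_length=20, population_size=30, generations=60,
           mutation_rate=0.05, seed=0):
    """Evolve bit-string genomes to maximize `fitness`."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(genome_length)]
                  for _ in range(population_size)]
    for _ in range(generations):
        # Selection: keep the fitter half of the population unchanged.
        population.sort(key=fitness, reverse=True)
        survivors = population[:population_size // 2]
        children = []
        while len(survivors) + len(children) < population_size:
            mother, father = rng.sample(survivors, 2)
            # One-point crossover: prefix from one parent, suffix from the other.
            cut = rng.randint(1, genome_length - 1)
            child = mother[:cut] + father[cut:]
            # Mutation: flip each bit with a small probability.
            child = [1 - g if rng.random() < mutation_rate else g for g in child]
            children.append(child)
        population = survivors + children
    return max(population, key=fitness)


best = evolve(fitness=sum)  # fitness = number of 1 bits in the genome
print("best genome:", best, "fitness:", sum(best))
```

Swapping the fitness function for a validation score and the bit string for a set of hyperparameters gives the basic shape of evolutionary hyperparameter search.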

Applications of Artificial Learning Across Industries

From 2020–2025, artificial learning moved from pilots to core infrastructure across multiple sectors. These AI applications demonstrate the broad range of problems that data-driven systems can now address.

Healthcare

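AI-assisted radiology now helps clinicians detect tumors on MRI and CT scans, and similar tools support pathology. Google’s LYNA (Lymph Node Assistant), which analyzes lymph node pathology slides for metastatic breast cancer, reportedly detected 9.3% more cancers at the same false-positive rate as human specialists. FDA-cleared AI tools analyzed over 100 million scans by 2024.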

AlphaFold 2 (2020) reached a median accuracy score above 90 (GDT) on the CASP14 protein structure benchmark, up from roughly 60 for earlier methods, with 200+ million predictions now available through AlphaFold DB. This accelerates drug discovery by revealing how proteins fold from their amino acid sequences, helping researchers analyze complex data at unprecedented speed.

Transportation

Self-driving cars use sensor fusion, perception networks, and reinforcement learning for planning. Waymo’s robotaxis had logged 20+ million autonomous miles in Phoenix and San Francisco by 2024, and the company’s published safety data reports crash rates well below human-driver benchmarks.

Tesla’s FSD v12.5 (2024) shifted to end-to-end neural networks trained entirely on video from the fleet, with no explicit rules for traffic scenarios. The system learns to recognize patterns in driving situations directly from billions of miles of human examples.

Finance and Banking

Fraud detection uses anomaly detection on transaction graphs. PayPal’s graph neural networks flag over $1 billion in fraudulent transactions yearly by identifying patterns humans would miss in the input data.

Algorithmic trading applies reinforcement learning: JPMorgan’s LOXM system (2017) reduced execution slippage by 20%. Credit scoring models using machine learning methods now evaluate applicants based on hundreds of behavioral signals beyond traditional metrics.

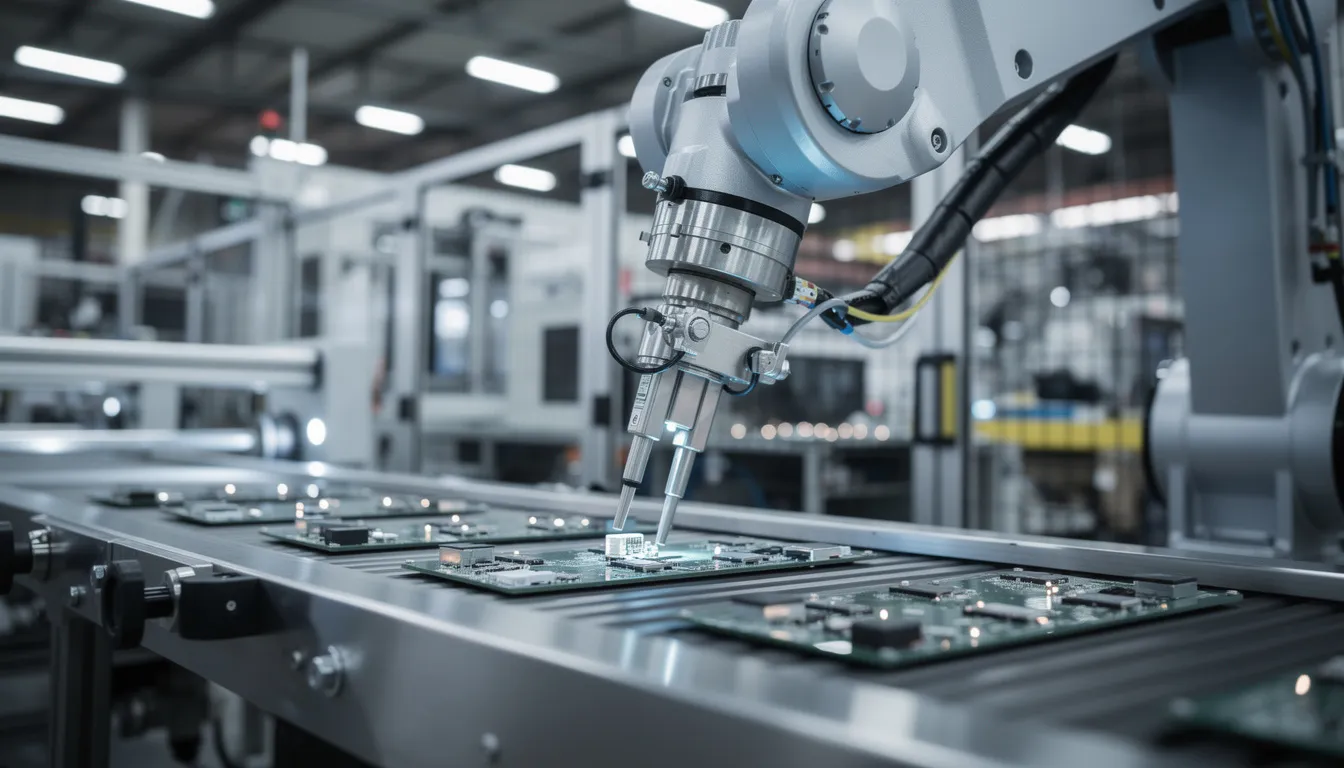

Manufacturing and Logistics

Predictive maintenance analyzes sensor data from machinery. GE reports 10-20% reductions in jet engine downtime using RNNs trained on terabytes of operational data.

Computer vision systems inspect production lines with 85% defect detection rates. Warehouse robotics optimized via reinforcement learning improve picking efficiency while adapting to changing inventory layouts.

Media and Personalization

Recommender systems on YouTube (2 billion users), Netflix, TikTok, and Spotify use deep learning on behavioral signals. YouTube’s deep bandit algorithms personalize 70% of watch time. Spotify’s transformer-based playlists improved listener retention by 30%.

These systems model human preferences indirectly, predicting what content will engage specific users based on patterns in past behavior.

Cybersecurity

Intrusion detection systems learn from historical attack patterns. Darktrace’s unsupervised “immune system” detects 400+ novel attacks daily using Bayesian models that identify abnormal network behavior.

Real-time anomaly flagging protects financial institutions, healthcare networks, and government systems from threats that traditional rule-based detection would miss.

Challenges, Risks, and Opportunities in Artificial Learning

The same machine learning techniques driving progress also create technical, social, and environmental challenges. Understanding these risks helps organizations deploy AI systems responsibly.

Bias and Fairness

Skewed training data encodes discrimination into models. The COMPAS recidivism tool showed false-positive rates of about 45% for Black defendants versus 23% for white defendants in ProPublica’s 2016 analysis, a consequence of biased historical data from the 2010s.

Facial recognition systems trained primarily on lighter-skinned faces performed significantly worse on darker-skinned individuals. Mitigation requires fairness constraints during training (typically dropping accuracy 2-5% to achieve equalized odds) and careful auditing of data collected for training.
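A basic fairness audit compares error rates across groups. The sketch below computes the false-positive rate per group on invented toy data; the group labels, predictions, and function names are all hypothetical. Note that equalized odds requires both false-positive and true-positive rates to match across groups, so this check covers only half of that criterion.

```python
def false_positive_rate(y_true, y_pred):
    """Share of actual negatives (label 0) that the model flagged as positive."""
    negatives = [p for t, p in zip(y_true, y_pred) if t == 0]
    return sum(negatives) / len(negatives) if negatives else 0.0


def fpr_by_group(y_true, y_pred, groups):
    """Compute the false-positive rate separately for each group label."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, grp in enumerate(groups) if grp == g]
        rates[g] = false_positive_rate([y_true[i] for i in idx],
                                       [y_pred[i] for i in idx])
    return rates


# Made-up labels and predictions for two groups of six people each.
y_true = [0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 1]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0, 0, 0, 1, 1]
groups = ["A"] * 6 + ["B"] * 6

rates = fpr_by_group(y_true, y_pred, groups)
print(rates)  # {'A': 0.5, 'B': 0.25}
```

A gap like the one between groups A and B here is the kind of disparity an audit should surface before deployment, even when overall accuracy looks acceptable.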

Privacy and Data Protection

Data-hungry models conflict with regulations like GDPR (2018) and the EU AI Act (political agreement 2023). Technical mitigations include:

Differential privacy adding calibrated noise during training (epsilon values of 1-10 balance utility with protection)

Federated learning keeping data on devices (Google Gboard trains across 1 billion+ users without centralizing data)

Organizations must balance model performance against privacy obligations and user trust.
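To show how "calibrated noise" and the epsilon parameter interact, here is a minimal sketch of the Laplace mechanism applied to a counting query. This is a deliberately simpler technique than the noise-during-training approach mentioned above (usually implemented as DP-SGD); it is swapped in because it fits in a few lines. The function names and the toy data are assumptions.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def private_count(values, predicate, epsilon, rng):
    """Return a differentially private count. Adding or removing one record
    changes a count by at most 1 (sensitivity 1), so Laplace noise with
    scale 1/epsilon provides epsilon-differential privacy."""
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)
ages = list(range(100))  # toy dataset: one record per person


def is_senior(age):
    return age >= 50


for epsilon in (0.1, 1.0, 10.0):
    answer = private_count(ages, is_senior, epsilon, rng)
    print(f"epsilon={epsilon}: noisy count = {answer:.1f} (true count is 50)")
```

Smaller epsilon values add more noise and give stronger privacy; larger values give more accurate answers with weaker guarantees, which is the utility trade-off the text describes.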

Security and Adversarial Attacks

Adversarial examples can fool ImageNet classifiers at 95% success rates using Projected Gradient Descent attacks. Model poisoning and backdoors pose risks in supply chains where models are trained on external data.

Defenses like adversarial training raise attack costs 10x but don’t eliminate risks entirely. Malware that evades detection and manipulated recommendation systems remain active threats.

Compute, Energy, and Concentration of Power

Training frontier models requires massive resources. GPT-4-class systems cost approximately $100 million in compute (on the order of 10^25 floating-point operations) and, by the figures cited here, consume energy rivaling 100,000 US homes annually.

This concentrates capabilities in a small set of tech giants and national labs. Per SemiAnalysis 2024 estimates, these organizations control 80%+ of top compute clusters. Smaller companies and researchers struggle to compete at the frontier.

Workforce and Economic Impact

McKinsey’s 2023 analysis found 45% of work activities are automatable with current technology, while the World Economic Forum’s 2020 Future of Jobs report projected that 97 million new roles could emerge by 2025. The transition requires reskilling programs; Coursera and Google certifications have enrolled over 1 million students.

New roles like prompt engineers, AI safety researchers, and responsible AI leads didn’t exist five years ago. Problem solving in this environment means adapting skills continuously.

Opportunities

Despite challenges, artificial learning offers transformative potential:

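Weather and climate modeling: GraphCast (2023) produces 10-day global forecasts in under a minute and outperformed the leading operational system on most evaluated targets, including cyclone tracks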

Drug discovery: AlphaFold accelerates timelines 10x; Insilico entered clinical trials in 18 months versus the typical 5 years

Productivity tools: Gen AI helps knowledge workers draft documents, analyze data, and code faster

Organizations that track meaningful developments, rather than drowning in hype, position themselves to capture these benefits. Curated information sources like KeepSanity AI help leaders focus on signal, not noise.

The Future of Artificial Learning

The period 2025–2030 looks like a phase of rapid integration rather than simply a race for bigger models. Understanding emerging trends helps organizations prepare for what’s coming.

Multimodal Integration

Models increasingly handle text, images, audio, video, and structured data together. GPT-4V (2023) accepts images alongside text. Gemini 2.0 reportedly handles 10 million tokens of context across modalities. These generative AI tools blur the lines between content types, enabling richer AI agents that understand the world more completely.

Efficient Models and On-Device AI

The massive resource requirements of frontier models have sparked a counter-movement toward efficiency. Microsoft’s Phi-3 mini (3.8B parameters) matches 13B-parameter models on benchmarks through superior data quality. Llama 3.2’s 1B and 3B variants run on smartphones at 40 tokens per second.

On-device processing addresses privacy concerns and reduces latency. Your phone may soon run capable AI models without cloud connectivity.

AI Agents and Agentic Workflows

Systems that chain together AI tools, browse the web, call APIs, and coordinate subtasks autonomously emerged from 2023 onward. Auto-GPT demonstrated early multi-step task execution. OpenAI reported that its o1 reasoning model (2024) solved 83% of problems on a qualifying exam for the International Mathematics Olympiad by spending more compute at inference time.

These agents shift from single-query interactions to persistent problem solving across multiple tools and information sources.

Regulation and Governance

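The EU AI Act (in force since August 2024) prohibits certain practices, such as most real-time remote biometric identification in public spaces by law enforcement, and places additional obligations on general-purpose AI models trained with more than 10^25 FLOPs. In the US, voluntary safety commitments in 2023 saw leading AI companies (seven in July, joined by eight more in September) pledge transparency and safety-testing measures.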

Expect increasing requirements for documentation, auditing, watermarking of generative outputs, and bias testing before deployment.

Human-AI Collaboration

The most productive framing positions artificial learning as a collaborative partner, augmenting creativity and decision-making rather than replacing human intelligence. Teams that incorporate AI tools effectively outperform both humans-only and AI-only approaches on most complex tasks.

Organizations that track signal, not noise, will adapt best to this evolving landscape.

How to Start Learning About Artificial Learning (for Professionals and Teams)

This section offers practical orientation for readers who want to understand and use artificial learning, not necessarily become full-time machine learning engineers.

Baseline Literacy Path

Start by understanding core AI concepts:

Supervised vs. unsupervised vs. reinforcement learning

Common model types (neural networks, decision trees, transformers)

Key limitations (data requirements, bias risks, uncertainty)

Accessible resources from 2018–2024 include Andrew Ng’s Coursera ML course (4M+ enrollees; covers logistic regression through neural networks) and fast.ai’s practical deep learning curriculum (PyTorch-based, zero-to-hero in 7 weeks).

Technical Ramp-Up (6–12 Months)

For those building hands-on AI skills:

| Phase | Focus | Duration |
|---|---|---|
| 1 | Python basics (NumPy, Pandas) | 1-2 months |
| 2 | Linear algebra, probability via MIT OCW | 2-3 months |
| 3 | Classical ML with scikit-learn | 2-3 months |
| 4 | Deep learning with PyTorch/HuggingFace | 3-4 months |

Understanding the programming language fundamentals and data science basics enables you to read research papers, evaluate vendor claims, and prototype solutions.

Non-Technical Professional Path (1–3 Months)

For product managers, executives, and domain experts:

Focus on AI product thinking and data literacy

Learn prompt engineering for generative AI models

Understand responsible AI principles and governance

Take short courses and internal workshops

You don’t need to train models to make informed decisions about AI applications in your organization.

Team Governance from Day One

Set up lightweight processes before projects scale:

Model documentation standards

Data access policies

Bias checks and fairness audits

Clear ownership of deployed systems

Starting with governance avoids painful rework as projects grow.

Staying Informed Without Overwhelm

The volume of AI news since 2022 has become unmanageable: 100,000+ papers on arXiv AI annually, daily announcements, constant releases.

KeepSanity AI provides a weekly, no-ads briefing that selects only the most important developments in AI models, AI tools, regulation, and applications. It helps product leaders, researchers, and executives stay updated without daily inbox overload, covering business, models, robotics, and trending papers in scannable categories.

FAQ about Artificial Learning

Is artificial learning the same as artificial intelligence?

Artificial intelligence is the broader field of making computer systems perform tasks that normally require human intelligence, encompassing logic, rules, planning, and learning. Artificial learning refers specifically to the mechanisms by which those systems improve from data and experience. Most modern AI systems, from recommendation engines to chatbots, rely heavily on machine learning and deep learning, which are the most successful forms of artificial learning today. Some AI techniques, like older rule-based expert systems from the 1980s, used little or no learning at all, showing that AI and artificial learning are related but not identical concepts.

Can artificial learning systems really learn on their own?

Many systems adapt autonomously once deployed. Models update with new data every night, reinforcement learning agents adjust strategies based on real-time feedback, and recommendation systems personalize continuously. However, humans still define the goals, reward functions, data pipelines, and constraints. The learning operates within boundaries that engineers establish. Fully autonomous self-improvement beyond predefined bounds remains a research topic, not how mainstream commercial systems in 2025 actually operate.

How long does it take for a company to build useful artificial learning applications?

Timelines vary dramatically based on scope and data readiness. A simple predictive machine learning model trained on clean historical data can often be prototyped in weeks. Large-scale, safety-critical systems like medical diagnostics or autonomous vehicles require years of development and validation. Data readiness is usually the bottleneck: organizations often spend months cleaning and integrating raw, siloed data before serious training begins. Starting with small, well-scoped projects like churn prediction or internal document search can deliver value within 3–6 months while building organizational confidence.

What skills do I need to work with artificial learning if I’m not a programmer?

Non-technical contributors add value through problem definition, data understanding, evaluation criteria, and ethical oversight. Valuable roles include AI product manager, domain expert annotator, responsible AI lead, and operations specialist who monitors deployed systems. Building comfort with basic statistics, data visualization tools like those in Google Workspace, and AI terminology enables effective collaboration with technical teams. You don’t need to code models yourself to make informed decisions about machine learning systems in your organization.

How can I keep up with rapid changes in artificial learning without getting overwhelmed?

The volume of AI news and research since 2022 has become genuinely overwhelming, with a constant stream of papers, releases, and announcements every week. The solution is a focused information diet: a small number of high-signal newsletters, a few trusted blogs or podcasts, and perhaps quarterly deep dives on topics relevant to your work. KeepSanity AI exemplifies this approach with a once-per-week, curated update with zero ads that selects only the most important developments across models, tools, regulation, and applications. This helps busy professionals stay informed without drowning in daily inbox overload or sacrificing their sanity to FOMO.