AI Train: How Modern AI Models Learn, Improve, and Power Real Products

Introduction

Welcome to your comprehensive guide on the “AI train” - the process by which modern AI models learn, improve, and ultimately power real-world products. Whether you’re a business leader seeking to leverage AI for competitive advantage, an engineer building intelligent systems, or a general reader curious about the technology shaping our future, this article is for you.

This guide starts by clarifying what “AI train” means and why understanding AI model training matters. Knowing how AI models are trained helps you make informed decisions about deploying, evaluating, or investing in AI solutions. The guide covers the full scope, from foundational concepts and training methods to practical business strategies, costs, and future trends. By the end, you’ll understand not just the “how” but also the “why” behind AI model training, and how it affects accuracy, safety, and business value.

Key Takeaways

“AI train” refers to the process of training AI models on data using methods like supervised, unsupervised, reinforcement, and transfer learning to recognize patterns and make accurate predictions.

Training shapes everything about an AI system: accuracy, bias, safety, speed, costs, and environmental impact. That makes it the single most important phase in building AI products.

High-quality, curated training data (not just massive datasets) is the core differentiator between mediocre and elite AI models in 2024–2026.

Businesses rarely train gigantic models from scratch; instead, they fine-tune pre-trained models (like GPT-style LLMs or vision models) on their domain data to save months of compute time and millions in costs.

KeepSanity AI helps readers stay sane amid the rapid evolution of training techniques by curating only the most important weekly AI news and research: no daily filler, no sponsor noise.

What Does It Mean to “Train” an AI?

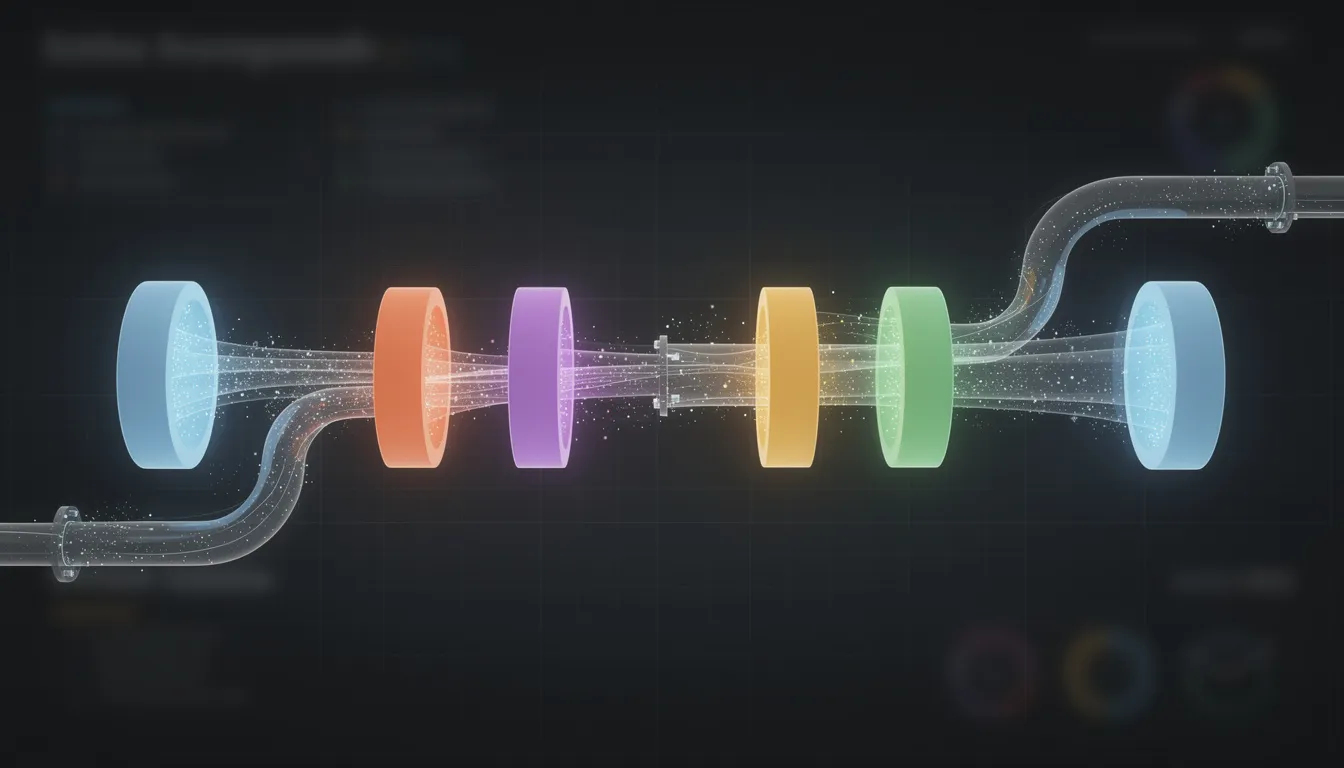

AI model training is the process of creating a custom, intelligent tool that analyzes and interprets vast amounts of data. When people say “AI train,” they’re referring to the process of teaching an AI model to recognize patterns and make predictions by exposing it to large datasets and adjusting its internal parameters (called weights). Think of it like teaching a new employee: you show them thousands of examples, give them feedback on their mistakes, and over time they get better at the job.

An AI model is essentially a mathematical function, often containing millions or billions of parameters, that takes inputs (text, images, audio, or tabular data) and produces outputs (predictions, labels, or generated content). During training, the model repeatedly processes examples and receives feedback, automatically adjusting itself to reduce errors over many full passes through the dataset, called epochs.

Key components of AI training include data curation, model architecture selection, and evaluation metrics. These elements work together to ensure the AI model learns effectively from raw information, achieves high accuracy, and meets the intended business or technical goals.

Here’s what happens during the AI model training process:

Data ingestion: The model receives batches of training data (historical examples with known outcomes).

Prediction: The model makes predictions based on its current parameters.

Error calculation: A loss function measures how far off the predictions were from the correct answer.

Parameter update: Backpropagation computes how much each weight contributed to the error, and gradient descent adjusts the weights to reduce future errors.

Iteration: This iterative process repeats across the entire dataset, often multiple times.
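The five steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production recipe: it assumes a one-feature logistic regression, a made-up toy dataset, and hand-picked values for `lr`, `epochs`, and `batch_size`.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def mean_loss(data, w, b):
    """Average cross-entropy loss of the model over the whole dataset."""
    eps = 1e-12  # avoids log(0)
    total = 0.0
    for x, y in data:
        p = sigmoid(w * x + b)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)

def train_logistic(examples, epochs=200, lr=0.5, batch_size=4, seed=0):
    """Fit p(y=1|x) = sigmoid(w*x + b) with mini-batch gradient descent."""
    rng = random.Random(seed)
    data = list(examples)  # copy so the caller's list is not reordered
    w, b = 0.0, 0.0
    history = []
    for _ in range(epochs):  # Iteration: one epoch = one pass over the data
        rng.shuffle(data)
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]  # Data ingestion
            grad_w = grad_b = 0.0
            for x, y in batch:
                p = sigmoid(w * x + b)  # Prediction
                # Error calculation: gradient of cross-entropy w.r.t. w and b
                grad_w += (p - y) * x
                grad_b += (p - y)
            # Parameter update: step against the averaged gradient
            w -= lr * grad_w / len(batch)
            b -= lr * grad_b / len(batch)
        history.append(mean_loss(data, w, b))
    return w, b, history

# Toy data (illustrative only): negative x -> class 0, positive x -> class 1
toy = [(-2.0, 0), (-1.5, 0), (-1.0, 0), (-0.5, 0),
       (0.5, 1), (1.0, 1), (1.5, 1), (2.0, 1)]
w, b, history = train_logistic(toy)
print(f"loss after first epoch: {history[0]:.3f}, after last: {history[-1]:.3f}")
```

Real frameworks automate the gradient step with backpropagation, but the loop has the same shape: batch in, predict, measure error, update, repeat.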

One example from 2024: a fraud detection model trained on millions of historical credit card transactions labeled “fraud” or “legit.” After extensive data cleaning and feature engineering, such models routinely report validation accuracies above 95%. Because fraud is rare, accuracy alone can flatter a model, so teams also track precision and recall. Done well, this lets banks flag anomalies in real time, before customers even notice suspicious activity.

Why AI Training Matters for Real-World Applications

AI training isn’t an abstract academic exercise. It’s what makes your Netflix recommendations eerily accurate, your translation apps nearly human-quality, and your coding assistant surprisingly helpful. The quality of training directly determines whether an AI tool actually works in real-world scenarios or falls flat.

Product reliability depends on training: Whether an AI can safely power customer support chatbots, medical triage assistants, or financial risk models comes down to how well it was trained. Poor training means poor predictions, false positives, and eroded user trust.

Massive improvements between 2022 and 2025: The leap from early clumsy chatbots to systems like GPT-4, Claude, and Gemini happened because of training innovations: better data curation, larger models, smarter optimization, and techniques like RLHF that align model output with human preferences.

Measurable business impact: A retailer training a recommendation model on purchase histories and clickstream data can increase average order value by 15-20%. Netflix has said that roughly 75-80% of what its members watch comes from personalized recommendations, which it credits with improving retention.

Customer behavior insights: Training AI models on real customer interactions helps marketing teams understand preferences, predict churn, and personalize experiences at scale-transforming raw data into actionable intelligence.

From the KeepSanity perspective: you don’t need to follow every training paper published on arXiv daily. Staying updated on training breakthroughs once a week is enough to inform strategy without drowning in the noise that plagues most AI newsletters.

Core Concepts: Models, Data, and Learning Signals

Before diving into specific training methods, let’s clarify the building blocks: the model architecture, the training data, and the learning signal (also called the loss function or feedback mechanism). Understanding these three pillars helps you grasp how AI learns from raw information.

Model architecture: This is the structure of the neural networks or algorithms that process inputs. Transformers dominate language and vision tasks (self-attention lets them relate every token to every other, and some recent models accept contexts of 1M+ tokens), convolutional neural networks excel at image classification with hierarchical feature extraction, and gradient-boosted decision trees (like XGBoost) rule tabular predictions with speed and interpretability. Architecture choice depends on your modality: text favors transformers, images favor CNNs or Vision Transformers, and control tasks favor recurrent or RL policies.

Training data: Historical examples that the model learns patterns from. This includes email messages labeled “spam” vs “not spam,” radiology images with expert diagnoses, code repositories with comments, or customer transaction histories. Data scientists typically split datasets into 60-80% training, 10-20% validation, and 10-20% test sets to prevent leakage and ensure honest evaluation.

Learning signal: The mechanism telling the model how wrong it was. Loss functions like cross-entropy (for classification, and for next-token prediction in LLMs) or mean squared error (MSE, for regression) quantify errors. Backpropagation computes how much each weight contributed to the loss, and an optimizer such as AdamW, a variant of gradient descent, uses those gradients to adjust the weights, nudging the model toward better performance without manual intervention.

A concrete 2023-2025 LLM example: during pretraining, a large language model predicts the next token across trillions of tokens of text. The loss measures how little probability the model assigned to the token that actually came next, and perplexity (the exponential of that loss) falls as training progresses, for example from 20+ to under 5. This establishes the foundation for downstream tasks like chat, code generation, and analysis.
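To make the perplexity figure concrete, here is a minimal sketch of how it follows from next-token probabilities: take the average negative log-probability the model gave to each correct token (the cross-entropy), then exponentiate it. The probability lists below are invented for illustration and do not come from any real model.

```python
import math

def cross_entropy(token_probs):
    """Average negative log-probability assigned to each correct token."""
    return -sum(math.log(p) for p in token_probs) / len(token_probs)

def perplexity(token_probs):
    """exp(cross-entropy): roughly, how many tokens the model is 'choosing between'."""
    return math.exp(cross_entropy(token_probs))

# Probability given to the correct next token at four positions (made-up values)
early_training = [0.05, 0.02, 0.10, 0.04]
late_training = [0.40, 0.25, 0.60, 0.30]

print(f"early perplexity: {perplexity(early_training):.1f}")
print(f"late perplexity:  {perplexity(late_training):.1f}")
```

A model that always spreads its bets evenly over four candidates has a perplexity of exactly 4, which is a handy way to read the number.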

Major AI Training Methods

Modern AI systems like large language models and recommender engines combine multiple training paradigms: supervised, unsupervised, self-supervised, reinforcement, transfer, and semi-supervised learning. Understanding when to use each method is crucial for training AI effectively.

Method choice depends on context: The availability of labeled data versus unlabeled data, the problem type (prediction, generation, control), and your compute budget all influence which approach makes sense.

Landmark models blend multiple methods: Systems launched between 2018 and 2025 (BERT, GPT-3, GPT-4, Gemini, Claude) rely heavily on self-supervised or unsupervised pretraining on massive datasets. BERT-style models are then fine-tuned with supervised labels, while chat-oriented systems such as GPT-4, Gemini, and Claude add supervised and reinforcement fine-tuning to improve output accuracy and alignment.

Hybrid pipelines are the norm: Rather than choosing a single method, production systems typically pretrain on vast amounts of unlabeled text, then fine-tune with labeled examples, and finally polish with RLHF to match human expectations.

The following sections break down each major training method with concrete examples, so you can understand which approaches fit different scenarios.

Supervised Learning

Supervised learning trains on labeled examples where both input and desired outputs are known-like “image → dog/cat” or “transaction → fraud/not-fraud.” This is the workhorse method for many production AI systems.

How it works: During training, the model compares its prediction to the ground-truth label and updates parameters to minimize error across the dataset. The iterative process continues until the model’s predictions converge on acceptable accuracy.

Concrete examples: Medical image classification (lung X-rays labeled by radiologists achieving 95% sensitivity), spam detection in emails (Gmail’s 99.9% efficacy), sentiment analysis of customer reviews that can boost NPS by 5-10 points, and demand forecasting at Walmart-scale operations.

Production staples from 2010–2024: Credit scoring models with 90%+ AUC, churn prediction systems reducing attrition by 10-15%, logistic regression models for customer segmentation, and classification systems across finance and healthcare all use supervised learning as their foundation.

The catch: Labeling costs matter. At $0.01-1 per example, supervised learning doesn’t scale infinitely-which is why other methods emerged to leverage unlabeled data.

Unsupervised and Self-Supervised Learning

Unsupervised learning uses unlabeled data to discover structure (clusters, anomalies), while self-supervised learning creates pseudo-labels from raw data itself-like masking words in a sentence and asking the model to fill in the blanks.

Clustering and dimensionality reduction: Unsupervised tasks power customer segmentation (grouping users into 5-10 personas via k-means), anomaly detection (isolation forests flagging 1-5% outliers), and exploratory analysis using techniques like PCA and t-SNE for visualization.

Self-supervised pretraining at scale: This is how transformers learn. BERT masks 15% of text tokens and predicts them; GPT models predict the next token. Models train on massive corpora like Wikipedia, Common Crawl, and code repositories-billions of examples without manual labeling.

Vision example: A vision transformer trained in 2021-2024 on billions of unlabeled images by predicting missing patches, then fine-tuned for image classification tasks, can slash labeled data requirements by 90% while maintaining competitive accuracy.

Foundation for modern AI: Self-supervised pretraining is what makes generative AI and natural language processing systems possible at scale. Without it, we’d need impossibly large labeled datasets for every application.
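The pseudo-label idea in this section is easy to see in code: the labels are cut out of the raw text itself. The sketch below is a simplification under stated assumptions. It works on whitespace tokens rather than a real tokenizer, and `masked_example` replaces every selected token with a hypothetical `[MASK]` string, which is simpler than the full masking recipe BERT uses.

```python
import random

MASK = "[MASK]"  # placeholder string chosen for this sketch

def next_token_examples(tokens):
    """GPT-style: every prefix becomes an input, the following token its label."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

def masked_example(tokens, mask_rate=0.15, seed=0):
    """BERT-style (simplified): hide a fraction of tokens; the originals are the labels."""
    rng = random.Random(seed)
    n_mask = max(1, round(len(tokens) * mask_rate))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    labels = {i: tokens[i] for i in positions}
    return masked, labels

sentence = "training data can label itself when you hide part of it".split()

for context, target in next_token_examples(sentence)[:3]:
    print(context, "->", target)

masked, labels = masked_example(sentence)
print(masked, labels)
```

No human annotated anything here, which is why this approach scales to billions of examples.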

Reinforcement Learning and RLHF

Reinforcement learning (RL) trains by trial and error: the model chooses actions and receives rewards or penalties, gradually learning a policy that maximizes long-term reward. It’s how AI works in environments where the correct answer isn’t immediately obvious.

Classic RL examples: Game-playing AIs such as AlphaGo, AlphaGo Zero, and AlphaZero, developed between 2016 and 2018, learned largely through self-play. AlphaGo Zero played about 4.9 million games against itself, and these systems reached superhuman performance at Go and chess. Robotics control policies learn to walk or grasp objects through millions of simulated trials before deployment.

RLHF for language models: Reinforcement Learning from Human Feedback uses human raters who rank multiple candidate responses. A reward model learns what humans prefer, and the base model is then optimized against that reward model, using algorithms like PPO, to refine its outputs.

Impact on conversational AI: RLHF was the key technique behind ChatGPT’s launch in November 2022 and subsequent LLMs through 2023-2025. It’s credited with reducing toxic outputs by 50-70% and dramatically improving helpfulness.

Alignment and risks: RLHF connects to user experience and safety-better alignment with human expectations, reduced off-topic or harmful outputs. But it can also encode rater biases or cause mode collapse if not carefully managed. AI trainers and researchers continue refining these techniques.
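One common way to train the reward model described above is a pairwise preference loss: for each pair of responses, the loss is small when the response raters preferred gets the higher score. The sketch below shows only that loss, with made-up scores; it is an illustration of the idea, not the training code of any particular system.

```python
import math

def preference_loss(reward_chosen, reward_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way."""
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Made-up reward scores for (preferred, rejected) response pairs
print(preference_loss(2.0, 0.0))   # reward model agrees with the rater: small loss
print(preference_loss(0.0, 0.0))   # undecided: loss equals log(2)
print(preference_loss(0.0, 2.0))   # reward model disagrees: large loss
```

Minimizing this loss over many rated pairs pushes the reward model to score responses the way raters ranked them, which is also how rater biases can leak in.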

Transfer Learning and Fine-Tuning

Transfer learning reuses a model trained on a large, general dataset and adapts it to a narrower task using far less data and compute. This is how most organizations actually do AI model training today.

Concrete examples: Reusing an ImageNet-pretrained CNN for classifying specific medical images (cutting error rates 10-20%), or fine-tuning an LLM like Llama on a company’s internal documentation to create a private support assistant that understands domain-specific terminology.

Fine-tuning mechanics: You update some or all of the model’s weights on the new data. Parameter-efficient techniques like LoRA (low-rank adaptation) train small adapter matrices that typically amount to less than 1% of the model’s parameters, enabling fine-tuning on consumer GPUs in hours rather than the millions of dollars needed to train from scratch.

Cost advantages: Fine-tuning a mid-sized open model takes hours on 4-8 cloud GPUs instead of months of pretraining. This slashes costs 100-1000x while retaining 90%+ of the base model’s generalization capabilities.

The practical path: For most business applications, transfer learning is the answer. You don’t need to train models from scratch unless you have highly specialized needs, regulatory requirements, or data that can’t leave your infrastructure.
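The "less than 1%" figure for LoRA follows from simple arithmetic. Instead of updating a full weight matrix of shape `d_out x d_in`, LoRA trains two thin matrices of shapes `d_out x rank` and `rank x d_in`. The sketch below assumes a single 4096 x 4096 layer and rank 8, which are illustrative values rather than settings from any specific model.

```python
def lora_trainable_fraction(d_out, d_in, rank):
    """Compare trainable parameters: low-rank adapter vs. the full weight matrix."""
    full = d_out * d_in               # parameters in the frozen weight matrix
    lora = rank * (d_out + d_in)      # parameters in the two adapter matrices
    return lora, full, lora / full

lora, full, frac = lora_trainable_fraction(d_out=4096, d_in=4096, rank=8)
print(f"{lora:,} trainable vs {full:,} frozen ({frac:.2%})")
# 65,536 trainable vs 16,777,216 frozen (0.39%)
```

Because adapters are usually attached to only some layers, the share of the whole model that gets trained is often smaller still.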

Semi-Supervised Learning

Semi-supervised learning combines a small set of labeled examples with a large set of unlabeled data to improve performance when labels are expensive or scarce.

Use cases: Email classification where only a fraction of messages are manually labeled, niche language tasks where labeling costs run $1K+ per task, or specialized image classification where expert annotators are limited.

Techniques: Pseudo-labeling (iteratively labeling confident predictions and adding them to training), consistency regularization (ensuring the model produces stable predictions under small perturbations), and co-training with multiple views of the data.

Quiet workhorse in production: Many 2022–2025 workflows in computer vision and natural language processing quietly use semi-supervised techniques to stretch limited labeled datasets, often boosting performance by 5-15% over purely supervised approaches.
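Pseudo-labeling, the first technique listed above, can be sketched as a filter: keep only the unlabeled examples the current model is very sure about, and treat its prediction as the label for the next training round. The example ids, class names, and the 0.95 threshold are assumptions for illustration.

```python
def select_pseudo_labels(predictions, threshold=0.95):
    """Keep examples whose top predicted class meets the confidence threshold.

    `predictions` maps an example id to a dict of class -> probability.
    """
    selected = {}
    for example_id, class_probs in predictions.items():
        label, confidence = max(class_probs.items(), key=lambda kv: kv[1])
        if confidence >= threshold:
            selected[example_id] = label
    return selected

# Model outputs on unlabeled emails (made-up values)
unlabeled_predictions = {
    "email_1": {"spam": 0.98, "not_spam": 0.02},
    "email_2": {"spam": 0.55, "not_spam": 0.45},
    "email_3": {"spam": 0.03, "not_spam": 0.97},
}
print(select_pseudo_labels(unlabeled_predictions))
# {'email_1': 'spam', 'email_3': 'not_spam'}
```

The threshold is the main design choice: set it too low and the model trains on its own mistakes, set it too high and few examples get added.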

The AI Training Pipeline: From Idea to Deployed Model

Training an AI model follows a lifecycle: defining the problem, collecting and preparing data, choosing a model, training, evaluation, and deployment with ongoing improvement. Understanding this pipeline helps you avoid costly missteps and wasted compute.

Problem definition is critical: Rushed or vague definitions waste 50%+ of compute time and lead to models that solve the wrong problem.

Data preparation dominates effort: Expect 70-80% of your time to go into sourcing, cleaning, and labeling relevant data-not running algorithms.

Iteration is inevitable: The model training process rarely succeeds on the first attempt; expect to cycle through evaluation and refinement multiple times.

Define the Problem and Success Metrics

AI training should begin with a narrowly scoped question, not a vague aspiration. “Reduce average support response time by 30% using a triage model by Q4 2026” is actionable. “Make our AI better” is not.

Clarify inputs and outputs: What data will the model see (past support tickets, customer profiles, transaction histories)? What must it predict or generate (priority class, suggested reply, routing decision, risk score)?

Specify measurable metrics: Accuracy, F1-score, latency under 200ms, cost per prediction, or business KPIs like revenue lift, churn reduction, or fraud detection rate improvements.

Include regulatory and ethical constraints: Especially in finance, healthcare, and hiring, define what the model cannot do. Privacy requirements, fairness standards, and compliance obligations should shape the problem from day one.

Real-world examples: A fintech company defining a fraud detection system needs clear thresholds for flagging versus blocking transactions. An e-commerce platform building a recommendation engine needs to balance personalization against diversity and fairness.

Collect, Clean, and Prepare Training Data

In practice, most AI training time goes into acquiring, cleaning, and labeling data-not configuring neural networks or tuning hyperparameters.

Sourcing data: Pull from logs, CRM systems, public datasets (Kaggle, Hugging Face), or vendor-provided datasets. Check licenses and privacy obligations (GDPR, CCPA) before using any data.

Cleaning steps: Deduplicate records, handle missing values, normalize formats, remove obviously corrupted or out-of-scope examples. Quality data beats quantity-a well-curated 1M sample dataset often outperforms 100M noisy examples.

Annotation workflows: Use internal experts for high-stakes labeling (medical imaging, legal documents), crowdsourcing platforms for scale, or specialized data providers for tasks like entity tagging, sentiment scoring, or output rating.

Dataset splits: Divide into training (60-80%), validation (10-20%), and test (10-20%) sets. This prevents data leakage and ensures you’re evaluating on examples the model has never seen during training.
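A reproducible split takes only a few lines. This sketch assumes a 70/15/15 split and a fixed random seed, both of which you would adjust for your own data; time-ordered or per-customer data usually needs a more careful split than a random shuffle.

```python
import random

def split_dataset(examples, train=0.70, val=0.15, seed=42):
    """Shuffle once with a fixed seed, then cut into train/validation/test."""
    items = list(examples)
    random.Random(seed).shuffle(items)
    n_train = round(len(items) * train)
    n_val = round(len(items) * val)
    return (items[:n_train],
            items[n_train:n_train + n_val],
            items[n_train + n_val:])

train_set, val_set, test_set = split_dataset(range(1000))
print(len(train_set), len(val_set), len(test_set))  # 700 150 150
```

Splitting before any cleaning statistics or feature scaling are computed is what keeps information from the test set out of training.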

Choose an Architecture and Training Setup

Model choice depends on the task, data scale, latency requirements, interpretability needs, and available compute. There’s no universal best model-only the right data and architecture for your specific problem.

Practical mappings:

Tabular business data → gradient-boosted trees (XGBoost, LightGBM) or feedforward networks

Images → CNNs or Vision Transformers

Text → Transformers / LLMs

Control and robotics → RL policies or recurrent architectures

Use pre-trained backbones: For most modern projects, start with models from Hugging Face or proprietary APIs rather than training from scratch. Open models like Llama, Mistral, or BERT variants provide strong starting points.

Infrastructure decisions: Single GPU vs multi-GPU, on-premises vs cloud services (AWS SageMaker, Google Vertex AI, Azure ML). Monitor costs carefully-GPU time adds up fast.

Run Training and Monitor Learning

During training, data is fed in batches, the model makes predictions, calculates loss against labels, and updates weights via backpropagation. This is where compute resources get consumed.

Epochs and duration: An epoch is a full pass over the dataset. Modern large-scale AI models may train for days or weeks on GPU clusters, while smaller business models might train in hours on modest hardware.

What to monitor: Training and validation loss curves (validation loss rising while training loss keeps falling signals overfitting), learning rate schedules, hardware utilization (GPU memory, compute efficiency), and checkpoint performance.

Generalization techniques: Early stopping (halt when validation loss stops improving) and regularization (dropout, weight decay) discourage memorization, while data augmentation expands your effective dataset.

Dashboard focus: Teams typically watch live metrics during an AI training run-loss trajectories, gradient magnitudes, learning rate adjustments-to catch problems before wasting hours of compute.
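Early stopping, mentioned above, is mostly bookkeeping: remember the best validation loss so far and stop once it has not improved for a set number of epochs. The `patience` and `min_delta` parameters and the loss values below are assumptions for illustration.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (epoch at which training stops, epoch with the best validation loss)."""
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch   # improvement: reset the clock
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch              # no improvement for `patience` epochs
    return len(val_losses) - 1, best_epoch        # ran to the end without stopping

# Validation loss per epoch (made-up): improves, then starts to overfit
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.53, 0.56, 0.60]
stop_epoch, best_epoch = early_stopping_epoch(val_losses)
print(stop_epoch, best_epoch)  # 6 3
```

In practice you would also save a checkpoint at each new best epoch, so the model you ship is the one from epoch 3 rather than the one from epoch 6.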

Evaluate, Stress-Test, and Iterate

Evaluation must go beyond “headline accuracy” to include robustness, fairness, safety, and performance on real-world edge cases. A model that looks good on average data might fail catastrophically on minority groups or unusual inputs.

Challenge sets: Beyond the held-out test set, create targeted datasets probing specific weaknesses-edge cases, minority demographics, rare scenarios, adversarial inputs.

Standard metrics by task:

Classification: ROC-AUC, precision, recall, F1

Language generation: BLEU, ROUGE, perplexity

Regression: Mean absolute error, RMSE

Ranking: NDCG, mean reciprocal rank

Human-in-the-loop evaluation: For generative AI, have reviewers rate quality, helpfulness, and safety. Automated metrics don’t capture everything humans care about.

Iterate based on results: Adjust data (more examples, better labels), model architecture, or training hyperparameters and retrain. The training process is rarely one-and-done.
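The classification metrics listed above are worth computing by hand once, because they show why headline accuracy can mislead. The sketch below uses an invented set of 100 transactions with 5 fraud cases and compares a model that never flags fraud with one that catches most of it.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# 100 transactions, 5 of them fraud (made-up data)
y_true = [1] * 5 + [0] * 95

always_legit = [0] * 100                          # never flags anything
useful_model = [1, 1, 1, 1, 0] + [1, 1] + [0] * 93  # 4 caught, 1 missed, 2 false alarms

print(classification_metrics(y_true, always_legit))
print(classification_metrics(y_true, useful_model))
```

The do-nothing model scores 95% accuracy with zero recall, while the useful model has slightly higher accuracy but far better recall and F1. That gap is the reason to report more than one metric.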

Deployment and Continuous Training

Once the model meets criteria, deployment begins via APIs, batch jobs, or edge devices. But training effectively continues after launch as the real world reveals new challenges.

Monitor for drift: Input data distributions change over time. Set up alerts for performance regression, unusual input patterns, and user feedback that suggests the model is struggling.

Periodic retraining: Especially in fast-moving domains like cybersecurity, e-commerce, or social media, schedule regular fine-tuning with fresh data to keep the model current.

A/B testing: Deploy new model versions alongside existing baselines to measure real business impact before full rollout. Let metrics-not intuition-guide the decision.

Connection to staying informed: Because the field evolves weekly, keeping up with training best practices via a concise newsletter like KeepSanity helps teams avoid outdated patterns and adopt proven improvements.
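The drift monitoring described in this section can be made concrete with a simple statistic. One widely used option is the population stability index (PSI), which compares how a feature was distributed across buckets at training time with how it is distributed in production. The bucket counts below are invented, and the alert thresholds in the comment are a commonly cited rule of thumb that you should treat as an assumption to tune.

```python
import math

def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI = sum over buckets of (actual% - expected%) * ln(actual% / expected%)."""
    exp_total = sum(expected_counts)
    act_total = sum(actual_counts)
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_frac = max(e / exp_total, eps)  # eps guards against empty buckets
        a_frac = max(a / act_total, eps)
        psi += (a_frac - e_frac) * math.log(a_frac / e_frac)
    return psi

# Order value bucketed into four ranges (made-up counts)
training_time = [250, 250, 250, 250]
production = [100, 200, 300, 400]

psi = population_stability_index(training_time, production)
# Rule of thumb (assumption): < 0.1 stable, 0.1-0.25 watch, > 0.25 investigate
print(f"PSI = {psi:.3f}")
```

A check like this can run on a schedule per feature and feed the alerts that trigger a retraining review.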

The Critical Role of Training Data Quality

Why Data Quality Matters

Data quality-relevance, coverage, correctness, and lack of bias-matters more than sheer dataset size for most AI training efforts. Throwing more data at a problem rarely fixes fundamental data quality issues.

Poor data leads to brittle models: Biased training data produces biased predictions. Incomplete data creates blind spots. Noisy labels teach the wrong patterns.

Concrete problems: Mislabeled medical images risking misdiagnosis, biased hiring data reinforcing discrimination (like Amazon’s experimental resume screener, reported in 2018 to have penalized women’s resumes), skewed financial histories mis-pricing risk for certain demographics.

High-profile controversies: A 2019 NIST evaluation of facial recognition algorithms found false positive rates 10-100x higher for some demographic groups, including people with darker skin, a gap widely attributed to imbalanced training datasets. These failures prompted regulatory scrutiny and public backlash.

Best Practices for Data Curation

Diverse sampling across demographics and use cases

Debiasing strategies (adversarial training, reweighting)

Rigorous annotation guidelines

Ongoing audits of both datasets and model outputs

Many organizations now require fairness audits before deployment.

Costs, Infrastructure, and Environmental Impact of AI Training

Training state-of-the-art AI models is resource-intensive: expensive GPUs and TPUs, massive energy consumption, and significant human labor for annotation and alignment. Understanding these infrastructure requirements helps you budget realistically.

| Resource Type | Frontier LLM Training | Mid-Size Model | Small Business Model |
|---|---|---|---|
| Compute Cost | $10-100M | $10K-1M | $100-10K |
| Training Time | Weeks-months | Days-weeks | Hours-days |
| GPU Count | 10,000+ H100s | 8-100 GPUs | 1-8 GPUs |
| Data Prep Time | Months | Weeks | Days |

Hardware backbone: NVIDIA A100/H100 GPUs (an H100 is rated at roughly 2,000 TFLOPS of dense FP8 compute, with the older A100 rated considerably lower), Google TPUs (v5e offers 4x cost efficiency for certain workloads), and cloud ML platforms handle most industrial-scale training jobs.

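Environmental footprint: Training can carry a heavy footprint. A widely cited 2019 study estimated that training one large Transformer with neural architecture search emitted about 626,000 lbs of CO2 (roughly five cars’ lifetime emissions), and a 2023 estimate put the water consumed to cool GPT-3’s training at around 700,000 liters. This has sparked the “green AI” movement toward more efficient architectures.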

Human labor costs: Annotation runs $0.05-0.50 per example depending on complexity. RLHF requires human raters-often globally distributed-raising ethical concerns about fair compensation and working conditions.

Practical implications: Most businesses opt for fine-tuning pre-trained models or using AI tools via API access, avoiding the prohibitive costs of training from scratch. This “don’t reinvent the wheel” approach makes AI accessible to many organizations without massive budgets.
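A back-of-the-envelope calculation shows why the gap between fine-tuning and frontier training is so wide. The sketch below multiplies GPU count by wall-clock hours by an hourly price. The $2.50 per GPU-hour figure and the 60-day frontier run are assumptions for illustration, not vendor quotes, and real bills also include storage, networking, failed runs, and staff time.

```python
def training_cost_usd(num_gpus, hours, price_per_gpu_hour):
    """Rough compute bill: GPUs x wall-clock hours x hourly price per GPU."""
    return num_gpus * hours * price_per_gpu_hour

PRICE = 2.50  # ASSUMPTION: illustrative price per GPU-hour

fine_tune = training_cost_usd(num_gpus=8, hours=6, price_per_gpu_hour=PRICE)
frontier = training_cost_usd(num_gpus=10_000, hours=60 * 24, price_per_gpu_hour=PRICE)

print(f"fine-tune: ${fine_tune:,.0f}")   # fine-tune: $120
print(f"frontier:  ${frontier:,.0f}")    # frontier:  $36,000,000
```

Under these assumptions the frontier run costs 300,000 times more than the fine-tune, which is why most teams start from a pre-trained model.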

Business Playbook: When (and How) Your Company Should Train AI

AI training decisions are strategic: what to buy, what to fine-tune, and what (if anything) to build from scratch based on your size, data assets, and risk profile.

Three broad options:

| Approach | Best For | Cost Range | Technical Expertise Required |
|---|---|---|---|
| SaaS/AI APIs | Startups, rapid prototyping | $/month subscription | Low |
| Fine-tune open/commercial models | Mid-size enterprises with domain data | $1K-100K | Medium |
| Build custom from scratch | Regulated industries, unique requirements | $1M+ | High |

Example decision patterns: A startup uses OpenAI or Anthropic APIs to move fast without investing in in-house AI skills. A mid-size enterprise fine-tunes Llama or Mistral on internal documentation for a private assistant. A major bank funds bespoke models for compliance, data residency, and competitive advantage.

Governance essentials: Model documentation (model cards), internal “AI centers of excellence,” clear ownership across data, engineering, and legal teams. Auditability matters increasingly as regulations tighten.

Staying informed strategically: A weekly, noise-filtered newsletter like KeepSanity helps leaders track only the pivotal developments in training techniques, regulations, and AI tools that might justify revisiting their AI strategy, without the FOMO and burnout of daily updates.

Future Trends in AI Training (2025–2030)

Based on 2023–2025 research trajectories, here’s where AI training is heading over the next five years-grounded in observable developments, not speculation.

More efficient architectures: Sparse models and mixture-of-experts (like Mixtral 8x7B, which activates only about 13B of its roughly 47B parameters per token) mean each token uses roughly 70% less compute than a dense model of the same total size would, making powerful models more accessible.

Synthetic data at scale: Generating training data synthetically to augment or replace scarce real data is growing, but requires careful validation to avoid feedback loops and artifacts. The scaling laws appear to hold when synthetic data is well-validated.

Regulatory mandates: The EU AI Act and emerging global frameworks are beginning to require transparency around training data provenance, evaluation methodology, and risk management for high-stakes AI systems.

Continual learning: More organizations will move toward setups where models receive frequent small updates from streaming data rather than infrequent, massive retrains-reducing staleness and improving responsiveness to new data and customer behavior patterns.

Alignment advances: Techniques like Direct Preference Optimization (DPO) are showing efficiency gains over RLHF, making alignment more accessible. Open-source LLMs (7B-405B parameters) are democratizing access to capable base models.
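The mixture-of-experts idea in this section comes down to a small routing step: a router scores every expert for the current token, only the top few experts run, and their outputs are blended using normalized weights. The sketch below shows top-2 routing over eight experts with invented router scores. It illustrates the general technique and is not the code of any specific model.

```python
import math

def top_k_route(router_logits, k=2):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)  # subtract max for stability
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: exps[i] / total for i in chosen}

# Router scores for one token across 8 experts (made-up values)
logits = [0.1, 1.2, -0.3, 2.0, 0.0, -1.1, 0.4, 0.7]
weights = top_k_route(logits, k=2)
print(weights)  # experts 3 and 1 are selected; the other six do no work
```

Because six of the eight experts are skipped for this token, most of the model's parameters sit idle on any single forward pass, which is where the compute savings come from.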

FAQ: AI Training in Practice

How long does it take to train an AI model?

Timeline varies enormously. A small tabular model using scikit-learn or XGBoost might train in minutes or hours on a laptop. Mid-sized language or vision models can take days on cloud GPUs. Frontier models with hundreds of billions of parameters require weeks of continuous training on large GPU clusters, plus months of preparation and tuning.

For typical business use cases, the time-consuming part is usually data preparation and evaluation (often 70%+ of total project time), not the raw training step itself. Start training once your data pipeline is solid, not before.

Do I need a PhD to train useful AI models?

No. A PhD is not necessary to train models for most practical business applications. Strong software engineering and data skills plus good learning resources are often sufficient for production-ready models.

Modern AI tools-managed notebooks, AutoML platforms, low-code ML services-make basic experimentation accessible to analysts and engineers without deep ML backgrounds. That said, highly novel research, large-scale distributed training, and cutting-edge alignment work still require deep expertise in machine learning, optimization, and systems engineering.

What tools and frameworks are commonly used for AI training?

The mainstream ecosystem includes:

Deep learning frameworks: PyTorch (dominates ~70% of research), TensorFlow/Keras (strong in enterprise), JAX (growing in research)

Classic ML: scikit-learn, XGBoost, LightGBM for tabular data and traditional machine learning tasks

Cloud platforms: AWS SageMaker, Google Vertex AI, Azure ML for managed training at scale

Model hubs: Hugging Face for sharing pre-trained models and datasets (500K+ models available)

Experiment tracking: Weights & Biases, MLflow for monitoring training runs and reproducing results

Start with widely adopted, well-documented tools. Niche frameworks often lack community support when you hit problems.

Can AI be trained effectively with small datasets?

Yes, for many tasks. Small but high-quality datasets work well when you leverage transfer learning from large pre-trained models. Fine-tuning a pre-trained LLM or vision model on just hundreds or thousands of domain-specific examples often achieves strong results.

Techniques that help with limited data include data augmentation (creating variations of existing examples), semi-supervised learning (using unlabeled data to boost performance), and careful regularization to prevent overfitting. In extremely data-poor settings, invest more in expert labeling, synthetic data generation through prompt engineering, or re-scoping the problem to match available data.

How can I keep up with rapid changes in AI training without getting overwhelmed?

AI training research and tools evolve weekly. Trying to follow every paper on arXiv or respond to every daily newsletter quickly leads to burnout-we’ve been there.

A curated, low-noise approach works better: follow a few trusted researchers, subscribe to weekly digests that filter signal from noise, and set aside a fixed short time each week to review updates. KeepSanity AI does exactly this-one email per week with only the major developments in models, training techniques, tools, and regulation. No daily filler, no sponsored padding, just the full range of what actually matters.
